In the previous post in this intro series on macOS Incident Response, we looked at collecting device, file and system data. Today, in the second post, we’re going to take a look at retrieving data on user activity and behavior.

Why Investigate User Behavior?

There are a few reasons why we might be interested in user behavior. First, there’s the possibility of insider threats, whether unintentional or malicious. What has the user been using the device for, what have they accessed and who have they communicated with?

Second, ‘user behavior’ isn’t necessarily restricted to the authorized or designated user (or users), but also covers unauthorized users, including remote and local attackers. Who has accounts on the device, when have they been accessed, and do those access times correlate with the pattern of behavior we would expect to see from the authorized users? These are all questions that we would want to be able to answer.

Third, a lot of confidential and personal user data is stored away in hidden or obscure databases on macOS. While Apple have made some efforts recently to lock these down, many are still scrapable by processes running with the user’s privileges, though not necessarily with their knowledge. By looking at these databases, what they contain, and when they were accessed, we can get a sense of what data the company might have lost in an attack: everything from personal communications to contacts, web history, notes, notifications and more.

A Quick Review of SQLite

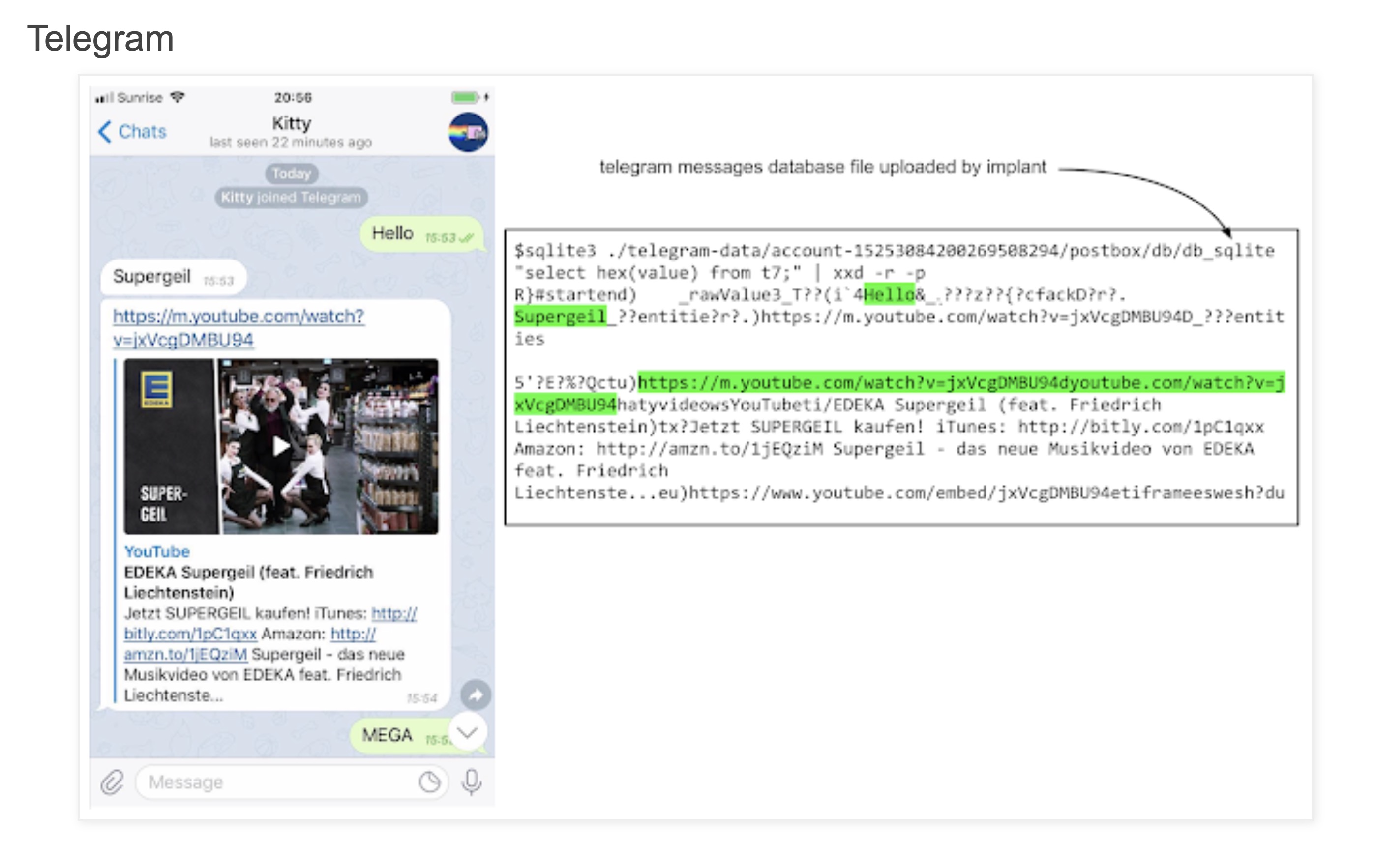

Although some of the data we will come across is in Apple’s property list (plist) format and, less often, in plain text files, most of the data we’re interested in is saved in SQLite databases. I am certainly no expert with SQL, but we can very quickly extract interesting data with a few simple commands and utilities. You can use the free DB Browser for SQLite if you want a GUI front end, or you can use the command line. I tend to use the command line for quick, broad-brush looks at what a database contains and turn to DB Browser for SQLite if I really want to dig deep and run fine-grained queries. Here are some very basic commands that serve me well.

sqlite3 /path/to/db .dump

This is my go-to command, which just pumps out everything in one (potentially huge) flood of data. It’s a great way to quickly look at what kind of info the database might contain. You can grep that output, too, if you’re looking for specific kinds of things like file paths, email addresses or URLs, and piping the output to a plain text file can make it easy to save and review if you don’t want to work directly on the command line all the time.

sqlite3 /path/to/db .tables

Tables gives you a sense of the different kinds of data stored and which might be most interesting to look at in detail.

sqlite3 /path/to/db 'select * from [tablename]'

Another one of my go-to commands, this is the equivalent of doing a “dump” on a specific table.

sqlite3 /path/to/db .schema

This command is essential to understand the structure of the tables in the database. The .schema command allows you to understand what columns each table contains and what kind of data they hold. We’ll look at an example of doing this below.
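To try the four commands side by side without touching real evidence, here is a minimal sketch that builds a throwaway database and runs each of them against it. The table name demo_contacts, its columns and the temporary path are made up for illustration. The -readonly flag is an addition that this post does not otherwise use; it asks sqlite3 to open the file without write access.

```shell
#!/bin/sh
# Build a throwaway database so the commands can be tried safely.
# The table and column names are hypothetical, not taken from macOS.
tmpdir=$(mktemp -d)
db="$tmpdir/demo.db"
sqlite3 "$db" "CREATE TABLE demo_contacts (id INTEGER PRIMARY KEY, address TEXT, hits INTEGER);
INSERT INTO demo_contacts (address, hits) VALUES ('donotreply@example.com', 17);"

# -readonly opens the file without write access.
sqlite3 -readonly "$db" .tables                         # list table names
sqlite3 -readonly "$db" .schema                         # show the CREATE statements
sqlite3 -readonly "$db" 'select * from demo_contacts'   # dump a single table
sqlite3 -readonly "$db" .dump | grep -i 'example.com'   # grep the full dump
```

Working against a copy of the database, rather than the original, is a further precaution worth considering when the file is evidence.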

Finding Interesting Data on macOS

There are a few challenges when investigating user activity on the Mac, and the first is actually finding the databases of interest. Aside from the fact that they are littered all over the user and system folders, they can also move around from one version of macOS to another and have been known to change structure from time to time.

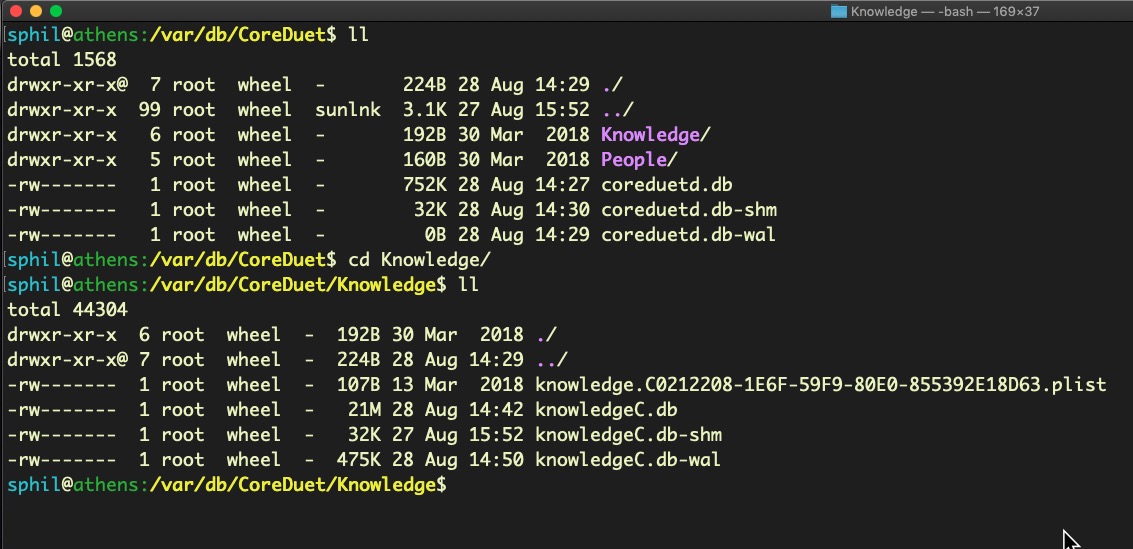

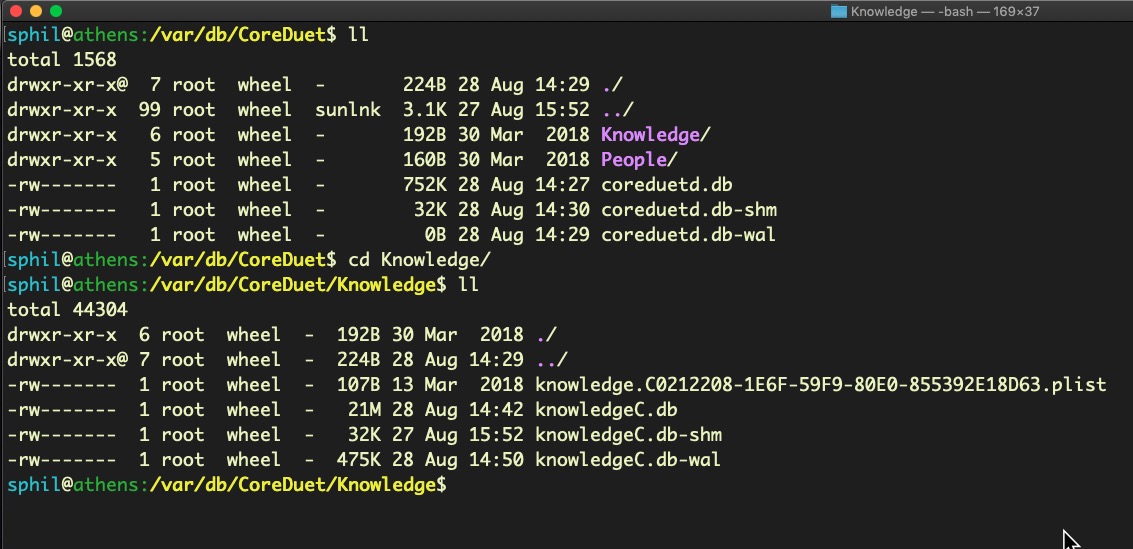

In the last post, when we played with sysdiagnose, you may recall that one location the utility scraped logs from was /var/db. There’s user data in there, too. For example, in CoreDuet, you may find the Knowledge/knowledgeC.db and the People/interactionC.db. SANS macOS forensics instructor Sarah Edwards wrote a great post on mining the knowledgeC.db, which I highly recommend. From the database, you will be able to discern a great deal of information about the user’s application usage and activity, browser habits and more. Some of this information we’ll also gather from other sources below, but the more corroborating evidence you can gather to base your interpretations on, the better.

The interactionC.db may give you insight into the user’s email activity, something we will return to later in this post.

In the meantime, let’s use this database for a simple example of how we can interpret the SQL databases in general. Start by changing directory to

/private/var/db/CoreDuet/People

and listing the contents with ls -al. You should see interactionC.db in the listing.

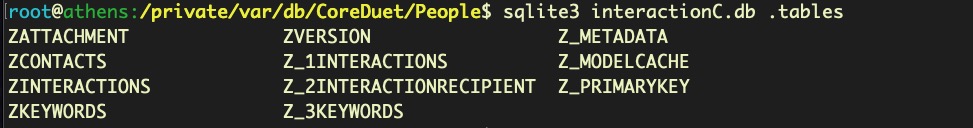

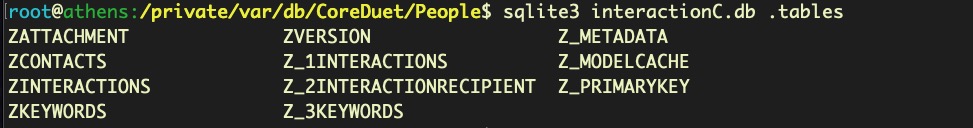

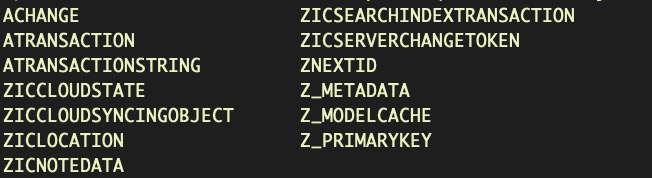

If we run .tables on this database, we can see it contains some interesting looking items.

Let’s dump everything from the ZCONTACTS table and have a look at the data. Each line has a form like this:

3|2|31|0|0|17|0|0|2|583116432.847281||583091780||0|588622185|||donotreply@apple.com|donotreply@apple.com|

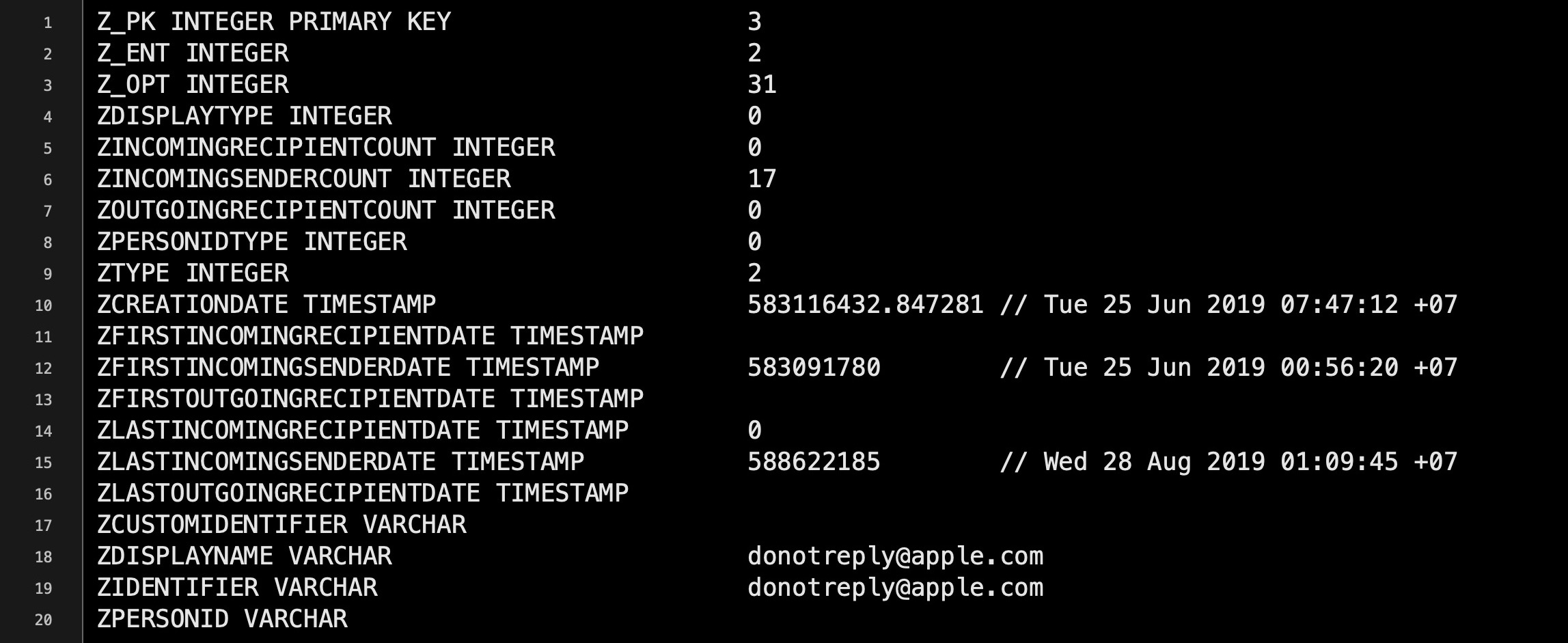

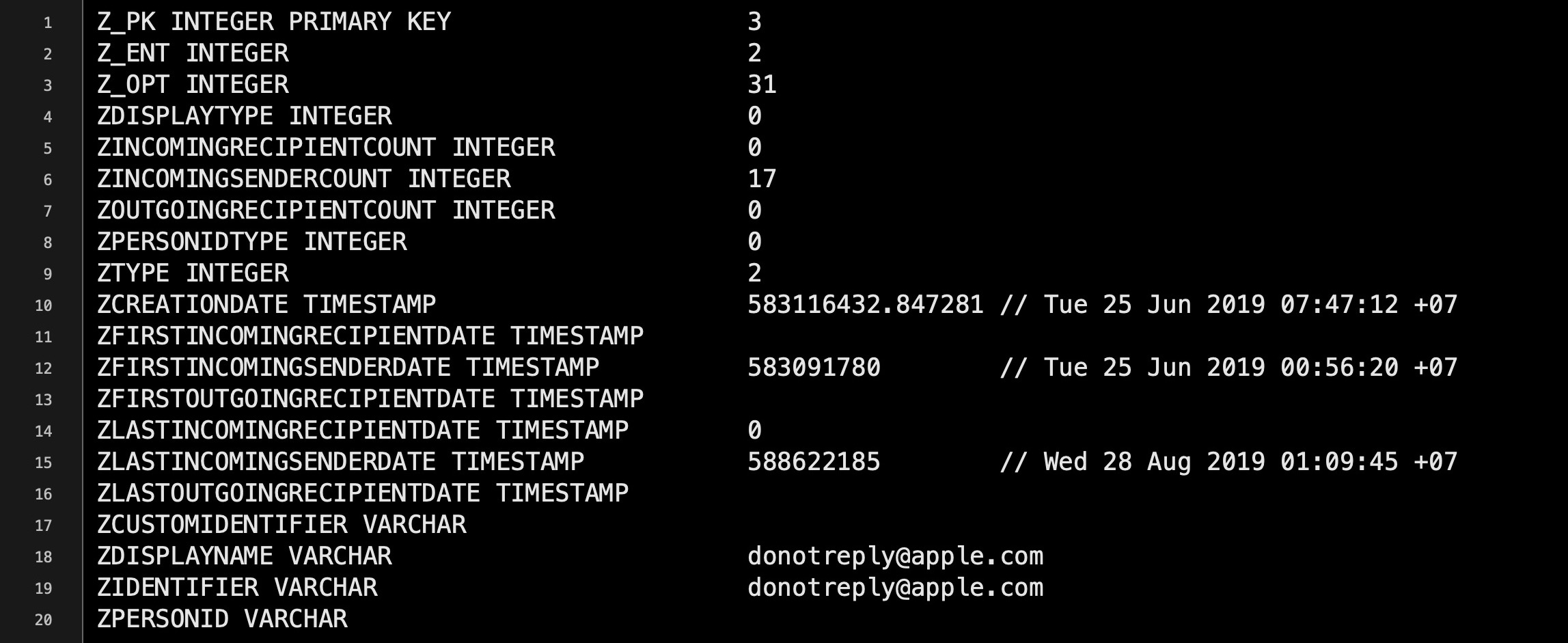

Sure, we can see the email address in plain text, but what does the rest of the data mean? This is where .schema helps us out. After running the .schema command, look for the CREATE TABLE ZCONTACTS schema in the output.

Each line tells us the column name and the kind of data the ZCONTACTS table accepts (e.g., the Z_OPT column takes integers). There are 20 possible columns in this table, and we can match those up with each column from the data extracted from the table earlier, where each column in that output is separated by a |. Here we also used the method of converting Cocoa timestamps to human-readable dates that we discussed in Part One.

The data indicates that between 25 June and 28 August the recipient received 17 messages from the email address identified in fields 18 and 19.

However, a word of caution about interpretation. Until you are very familiar with how a given database is populated (and depopulated) over time, do not jump to conclusions about what you think it’s telling you. Could there have been more or fewer than 17 messages during that time? Unless you know what criteria the underlying process uses for including or removing a record in its database, that’s very difficult to say for sure. In a similar vein, note that the timestamps may not always be reliable either. You cannot assume that a single database is sufficient to establish a particular conclusion. That’s why corroborating evidence from other databases and other activity is essential. What we are looking at with these sources of data are indications of particular activity rather than cast-iron proof of it.
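The column matching and timestamp conversion described above can be sketched in a few lines of shell. This is an illustration under stated assumptions rather than the method used in Part One: the function names cocoa_to_utc and number_fields are made up, the sample row is the ZCONTACTS line from earlier joined onto one line, and the conversion simply adds the 978307200 seconds that separate the Unix epoch (1 January 1970) from the Cocoa epoch (1 January 2001).

```shell
#!/bin/sh
# Seconds between the Unix epoch (1970-01-01) and the Cocoa epoch (2001-01-01), both UTC.
COCOA_OFFSET=978307200

# Convert a Cocoa timestamp to a UTC date string (any fractional part is dropped).
cocoa_to_utc() {
  secs=$(( ${1%.*} + COCOA_OFFSET ))
  # BSD/macOS date takes -r <seconds>; GNU date takes -d @<seconds>.
  date -u -r "$secs" '+%Y-%m-%d %H:%M:%S' 2>/dev/null ||
    date -u -d "@$secs" '+%Y-%m-%d %H:%M:%S'
}

# Print each |-separated field of a row on its own line, numbered from 1,
# so the numbers can be matched against the column order in the .schema output.
number_fields() {
  printf '%s\n' "$1" | awk -F'|' '{ for (i = 1; i <= NF; i++) printf "%d: %s\n", i, $i }'
}

row='3|2|31|0|0|17|0|0|2|583116432.847281||583091780||0|588622185|||donotreply@apple.com|donotreply@apple.com|'
number_fields "$row"
cocoa_to_utc 583116432.847281   # field 10 of the sample row
cocoa_to_utc 588622185          # field 15 of the sample row
```

The dates are printed in UTC. A timestamp close to midnight can fall on a different calendar day in the analyst’s or the user’s local time zone, which is one more reason to treat any single date with care.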

Databases in the User Library

A great deal of user data is held in various directories within the ~/Library folder. The following code will pump out an exhaustive list of the .db files that can be accessed as the current user (try with sudo to see what extras you can get).

cd ~/Library; find . -type f -name "*.db" 2> /dev/null

However, here’s another difficulty if you’re working on Mojave or later. Since Apple brought in enhanced user protections, you may find some files off limits even with sudo. To get around that, you could try taking a trip to the System Preferences.app and adding the Terminal to Full Disk Access. That’s assuming, of course, that there are no concerns about ‘contaminating’ a device with your own activity.
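A search by file name only finds stores that happen to end in .db, and it will miss files such as the Notes NoteStore.sqlite covered later in this post. One alternative, sketched below, is to test each file’s header instead: SQLite 3 files begin with the string “SQLite format 3” followed by a NUL byte. The function names is_sqlite and find_sqlite are made up for this example.

```shell
#!/bin/sh
# Succeed if the file begins with the SQLite 3 magic string.
is_sqlite() {
  # tr strips NUL bytes so the shell does not warn about them in binary files.
  [ "$(head -c 15 "$1" 2>/dev/null | tr -d '\000')" = "SQLite format 3" ]
}

# List every SQLite file under a directory, whatever its extension.
find_sqlite() {
  find "$1" -type f 2>/dev/null | while IFS= read -r f; do
    if is_sqlite "$f"; then printf '%s\n' "$f"; fi
  done
}

find_sqlite "$HOME/Library"
```

This reads the first few bytes of every file, so it is slower than matching on names, and the same access restrictions apply to files that the current user cannot open.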

Dumping a list of all the possible databases might look daunting, but here are just a few of the more interesting Apple ones you might want to look at, on top of those associated with 3rd party software, email clients, browsers and so on.

./Application Support/Knowledge/knowledgeC.db

./Application Support/com.apple.TCC/TCC.db

./Containers/com.apple.Notes/Data/Library/Caches/com.apple.Notes/Cache.db

./Mail/../

./Messages/chat.db

./Safari/History.db

./Suggestions/snippets.db

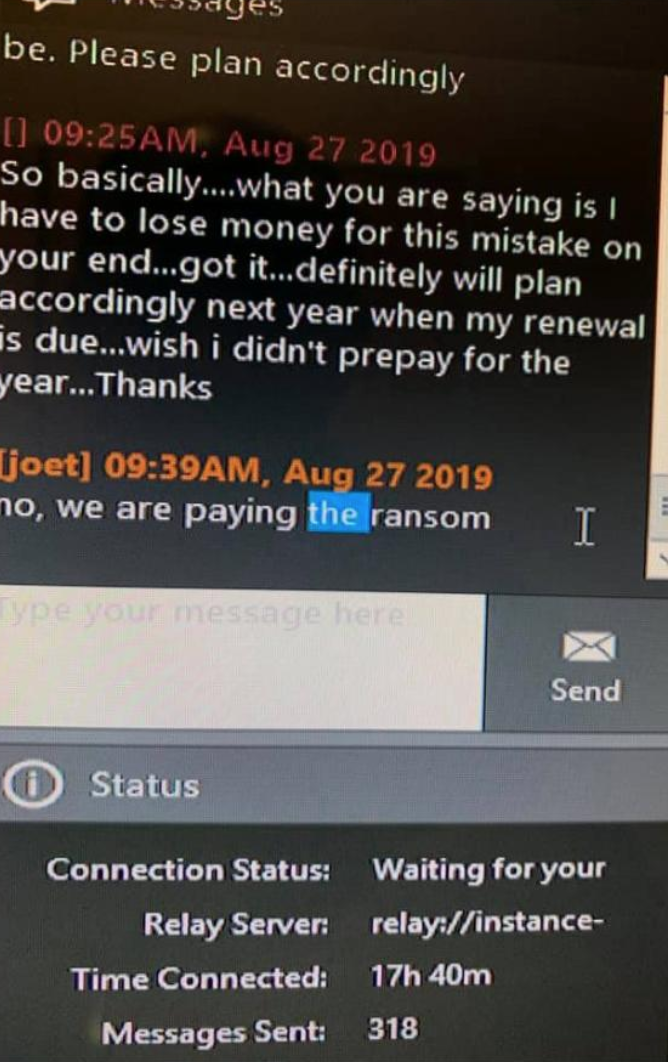

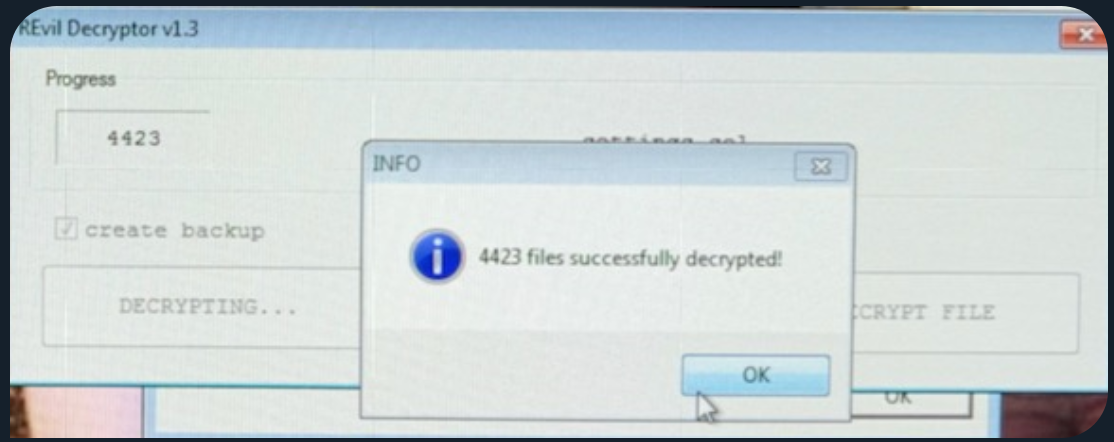

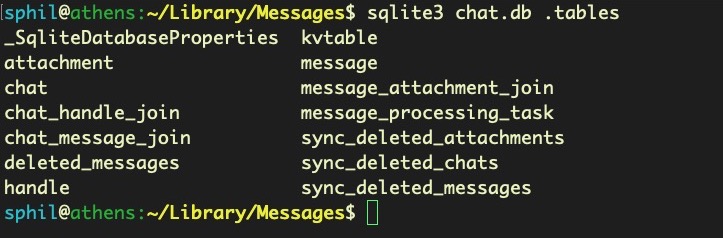

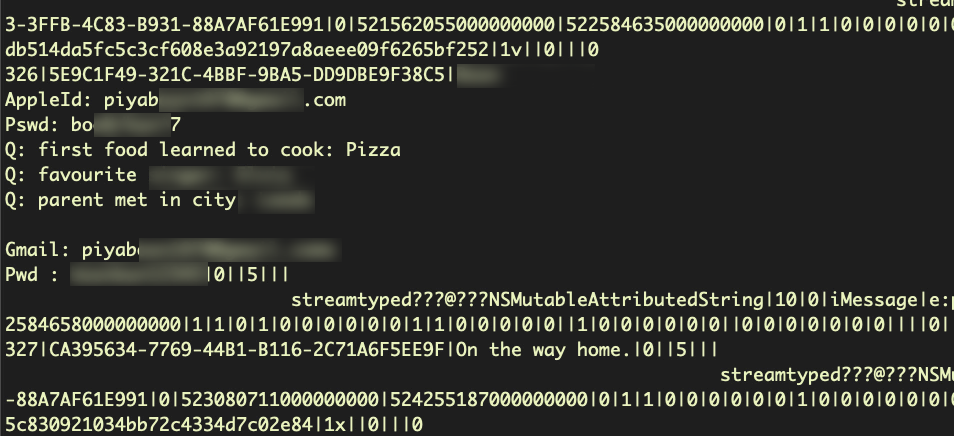

Let’s look at a few examples. Surprisingly, the Messages chat.db is entirely unprotected, so you can dump messages in plain text. People can be remarkably unguarded on informal chat platforms like this.

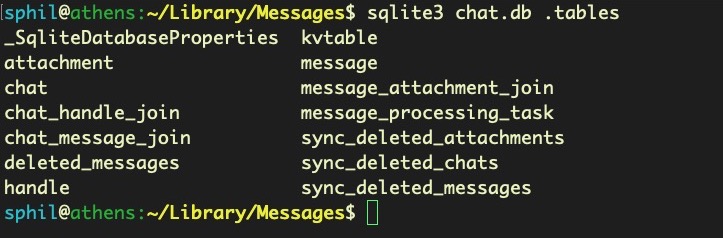

sqlite3 chat.db .tables

This user has basically left themselves open to compromise from any process running under their own user name.

sqlite3 chat.db 'select * from message'

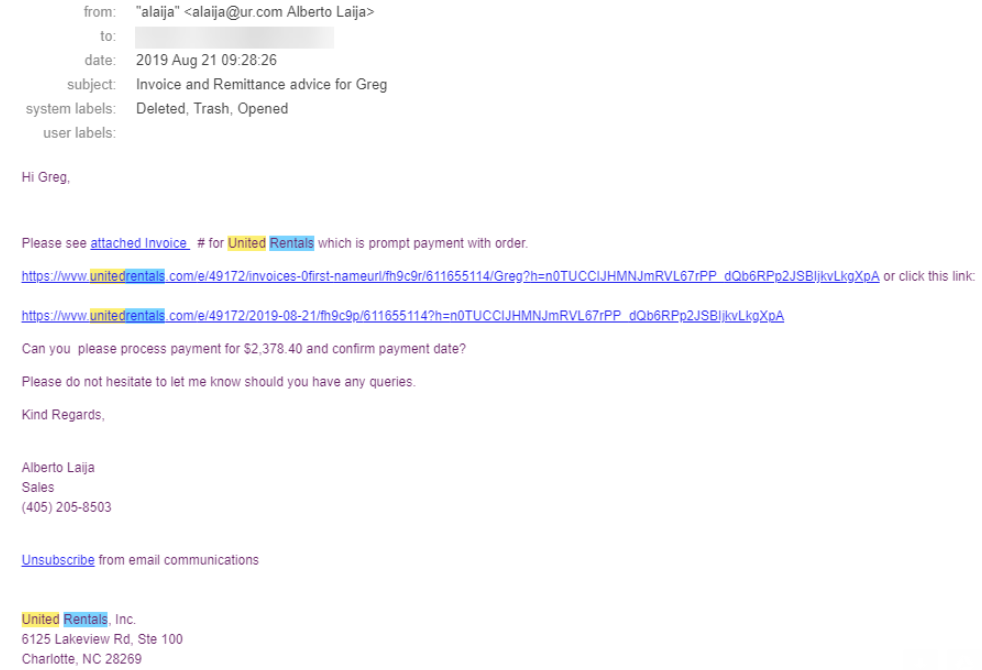

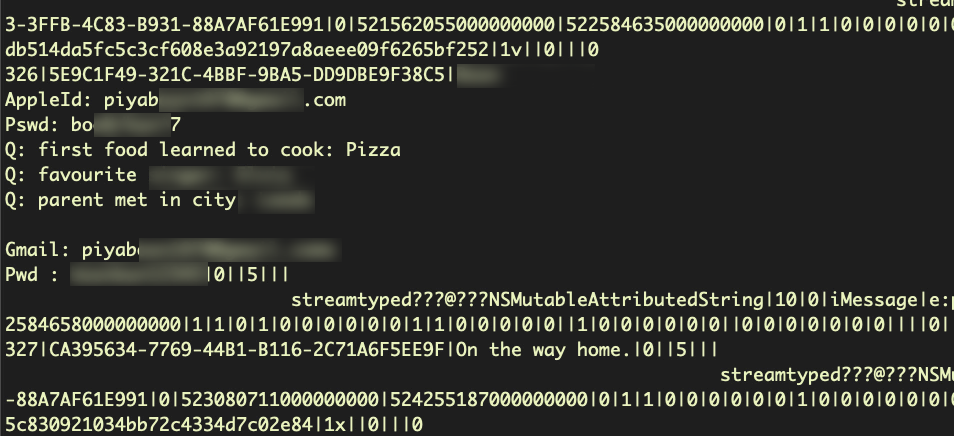

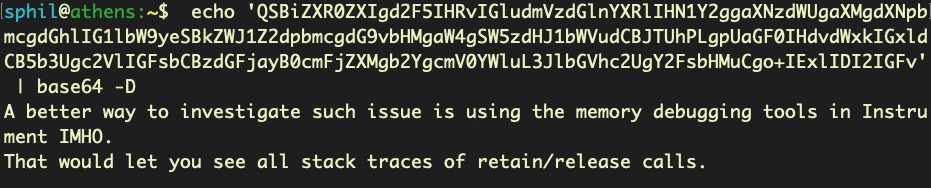

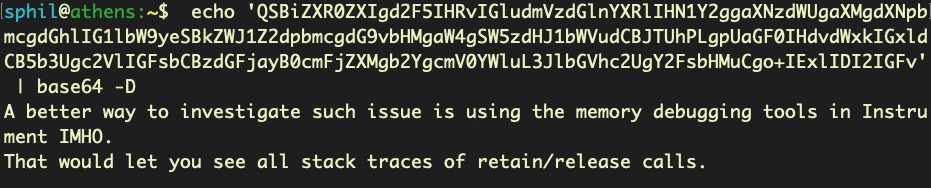

Mail is also completely readable once you dig down through the hierarchy of folders. Here the messages are not stored in a sqlite database, but use the .emlx format. These encode the email content in base64, which can easily be extracted and decoded.

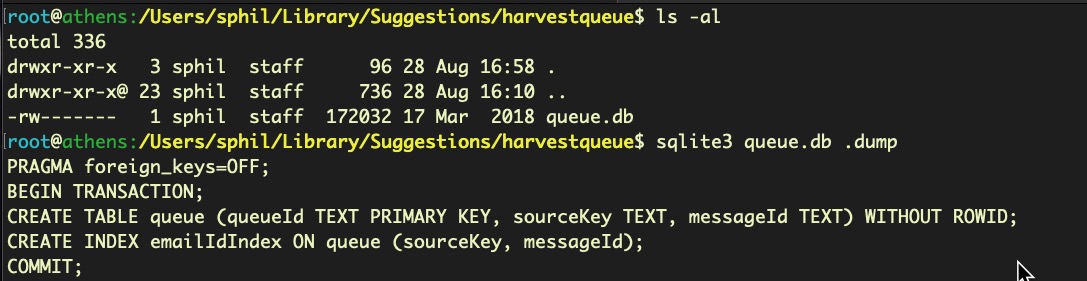

You can save yourself a lot of time with emails by reading the snippets.db in the Suggestions folder. This folder contains databases that are meant to speed up predictive suggestions by the OS in application searches (Contacts, Mail, etc.), as well as in Spotlight and the browser address bar. The snippets.db contains snippets of email conversations and contact information.

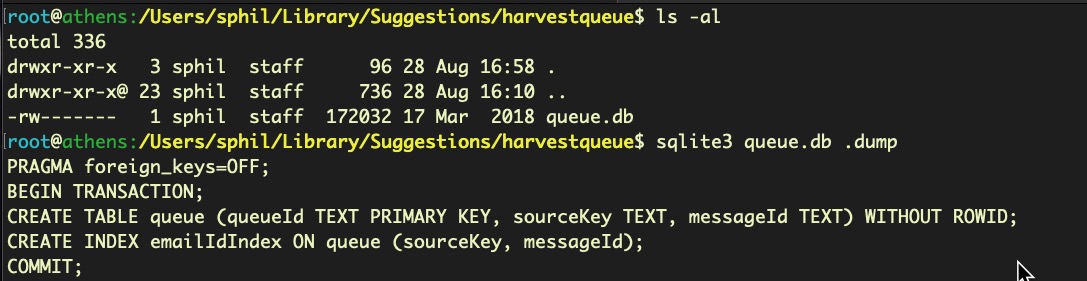

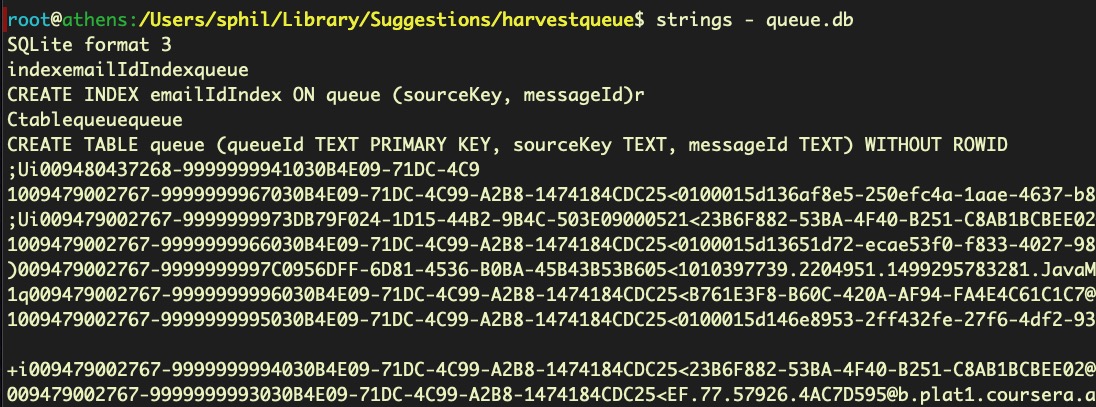

Sometimes you’ll get silent ‘permission denied’ issues on these databases, even when using root and Terminal has Full Disk Access. For example, in the image below, the file size of the queue.db clearly indicates that there’s more data in there than I seem to be getting from sqlite.

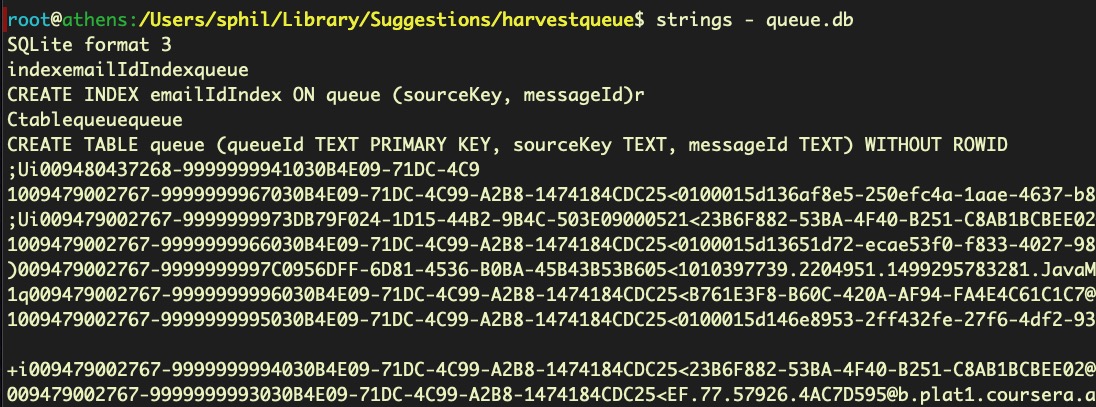

When this kind of thing happens, a ‘quick and dirty’ solution can be to turn to either the strings command or the xxd utility to quickly dump out the raw ASCII text and see if the contents are worthy of interest.
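As a rough sketch of that fallback, the two helpers below pull readable text out of a file that sqlite3 will not read cleanly. The function names raw_emails and raw_peek are hypothetical, and the email pattern is a loose illustrative regex, not a validator.

```shell
#!/bin/sh
# Extract printable runs from a file and keep anything resembling an email address.
# -n 6 ignores runs shorter than six characters.
raw_emails() {
  strings -n 6 "$1" |
    grep -E -o '[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}' |
    sort -u
}

# Show the first lines of a hex dump, with the ASCII rendering alongside the offsets.
raw_peek() {
  xxd "$1" | head -n 20
}

# Example calls (the path is a placeholder):
#   raw_emails /path/to/queue.db
#   raw_peek /path/to/queue.db
```

Swapping the pattern for one that matches URLs or file paths follows the same shape. Anything recovered this way has lost its table and column context, so treat it as a lead to follow up rather than a finding.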

Mining the Darwin_User_Dir for Data

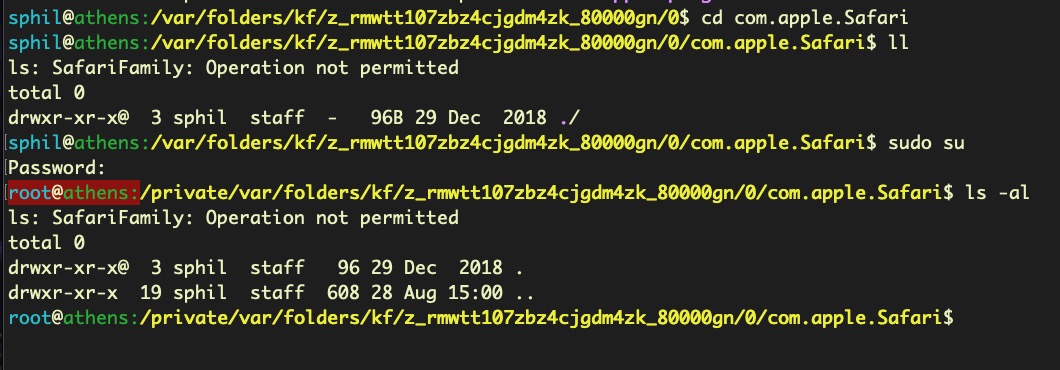

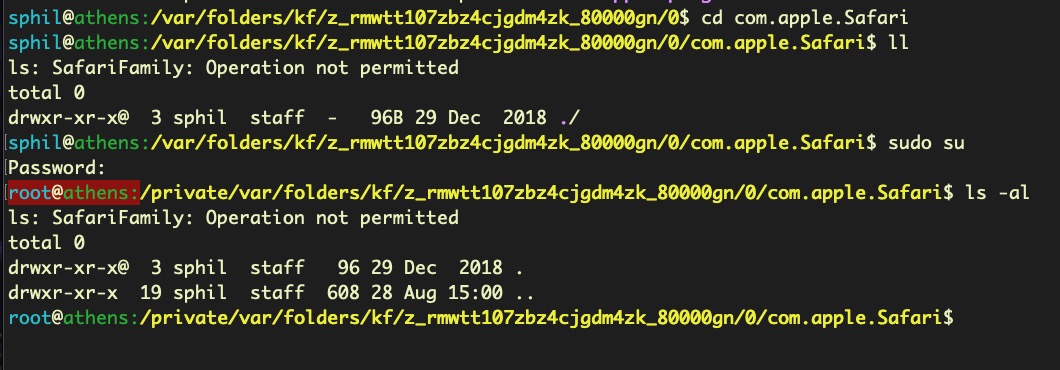

Apple hide some databases in an obscure folder in /var/folders/. When logged in as a given user, you can use getconf to look up the DARWIN_USER_DIR configuration variable and get there.

cd $(getconf DARWIN_USER_DIR)

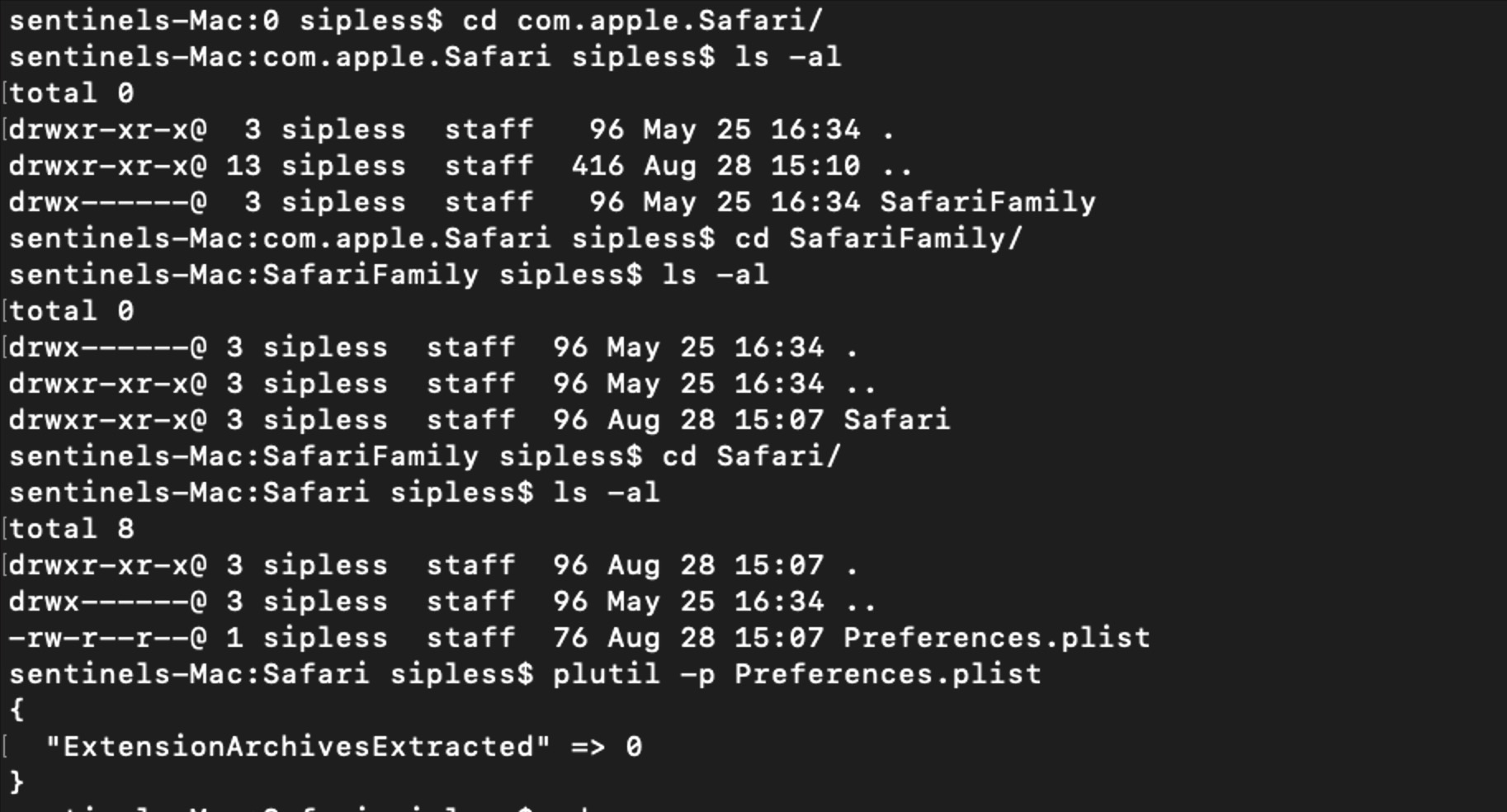

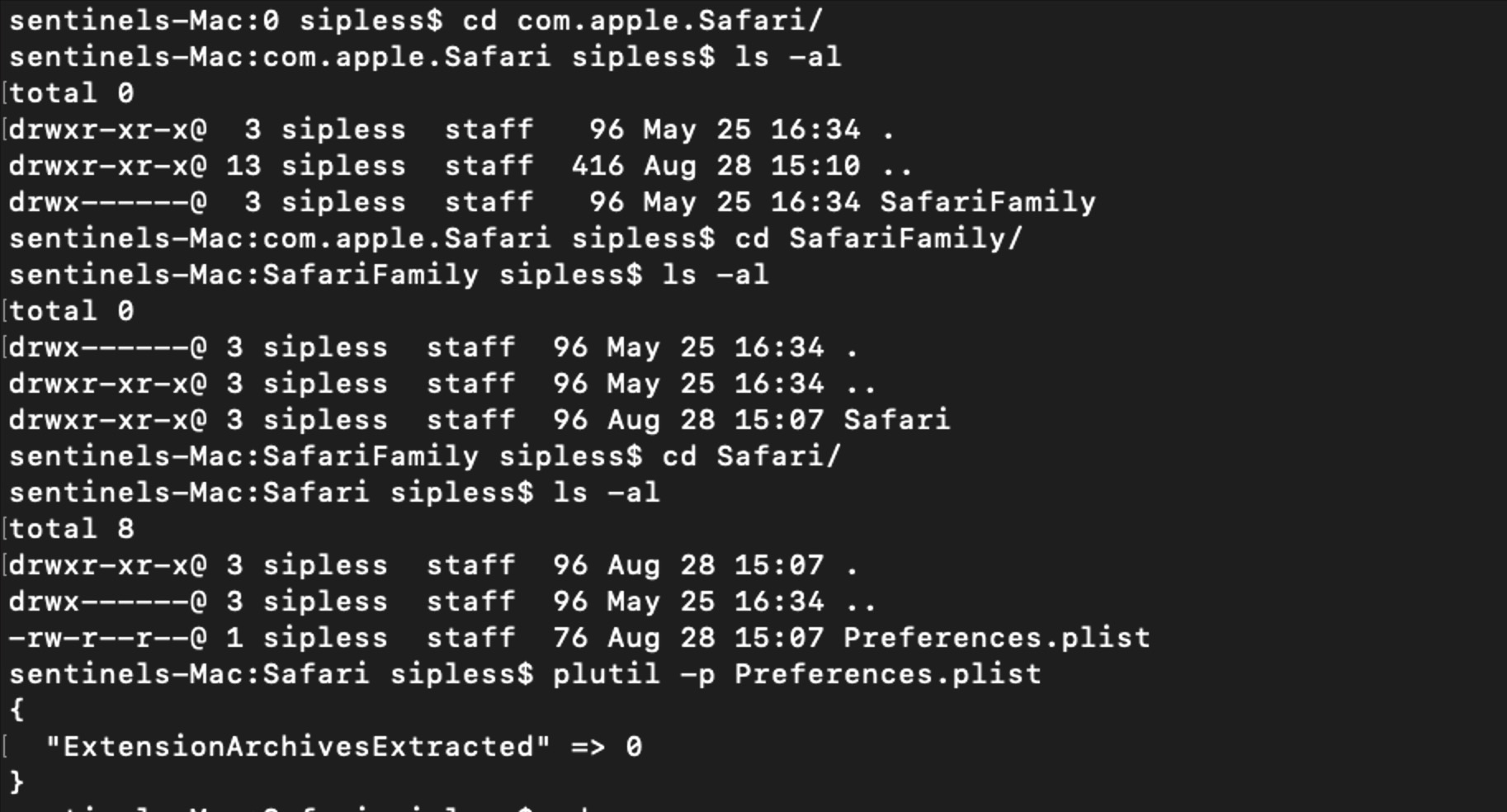

Again, you may find that, even with Terminal added to Full Disk Access, some directories remain off limits even for the root user, as the SafariFamily folder appears to be.

In this case, we can’t even dump the strings because we don’t have permission to list the file.

The only way to get access to these kinds of protected places is to turn off System Integrity Protection, which may or may not be something you are able to do, depending on the case.

Reading User Notifications, Blobs & Plists

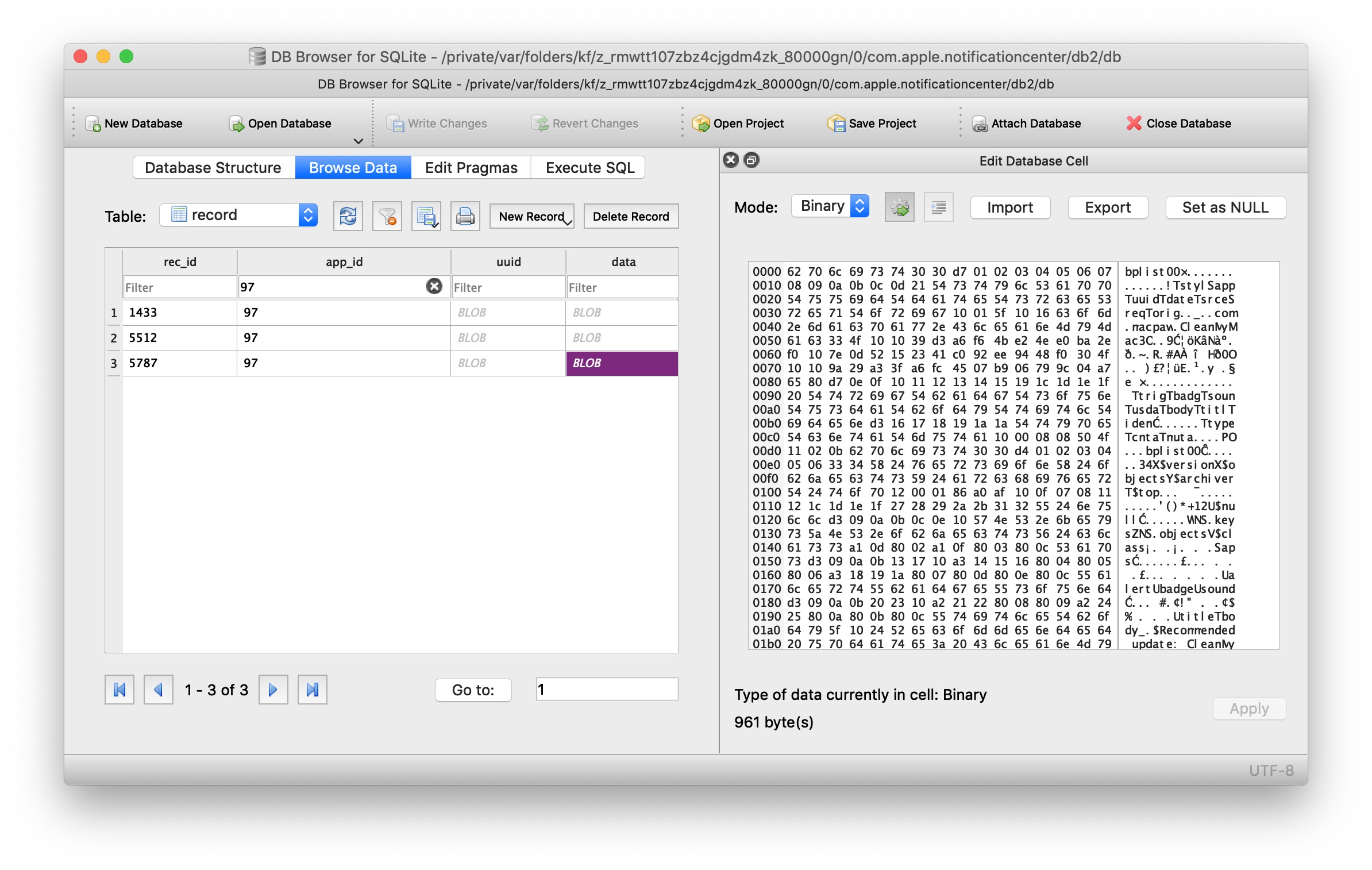

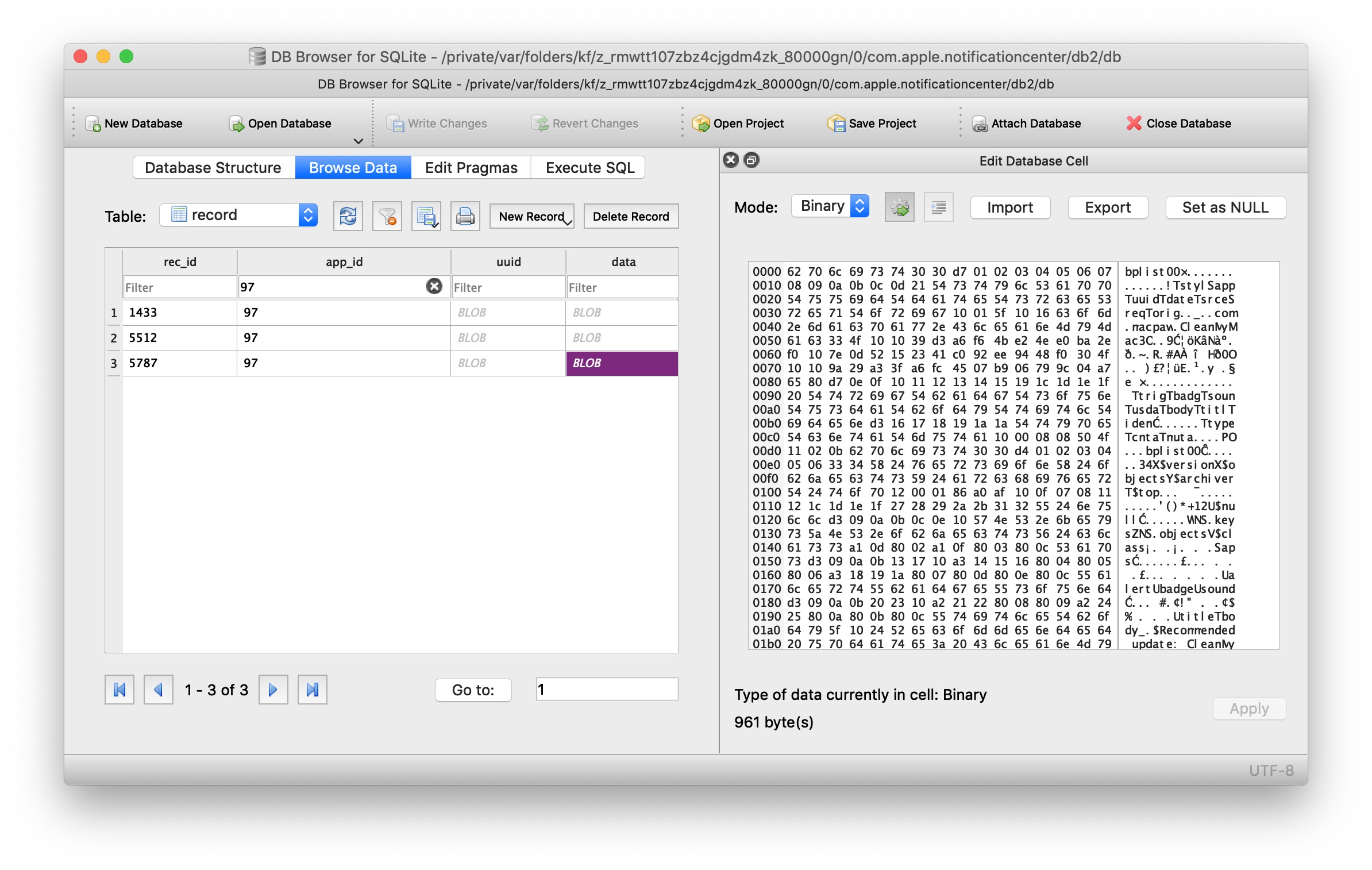

One of the databases you’ll find in the folder that the variable DARWIN_USER_DIR takes you to is the database that stores data from Notifications – messages sent from Applications like Mail, Slack and so on to the Notification Center and which appear as alerts and banners in the top right of the screen. Fortunately or unfortunately, depending on how you look at it, we don’t need special permissions to read this database.

If you’re logged in as the user whose Notifications you want to look at, the following command will take you to the directory where the sqlite database is located.

cd $(getconf DARWIN_USER_DIR)/com.apple.notificationcenter/

The Notifications database has changed at some point in time, and if you list the contents of the directory you may see both a db and a db2 folder. Change directory into each in turn and run the .tables and .schema commands to compare the different structures. Both use blobs for the data, so you will need a couple of tricks to read them.

One way is to open the database in DB Browser for SQLite, click on the blob data and view the source in binary format. You can export that source as blob.bin and then use plutil -p blob.bin to output it in nice human-readable text.

I’m usually in too much of a hurry to do all that. Instead, I’ll do something like

sqlite3 db 'select * from app_info'

to browse through the list of apps that have sent Notifications, then run a few greps on the entire database. For example, if I want to read Slack notifications, I can use something like this:

strings $(getconf DARWIN_USER_DIR)/com.apple.notificationcenter/db2/db | grep -i -A4 slack

And of course I can just swap ‘slack’ for ‘mail’ or whatever else looks interesting. I might then use the DB Browser and plutil method described above to dig deeper.

Reading Data from Notes, More Blob Tricks

There are plenty of application databases that we haven’t touched on, but one that I want to cover in this overview is Apple’s Notes. Not only might this be a good source of information about user activity, it’s also trickier to deal with than the other databases we’ve looked at.

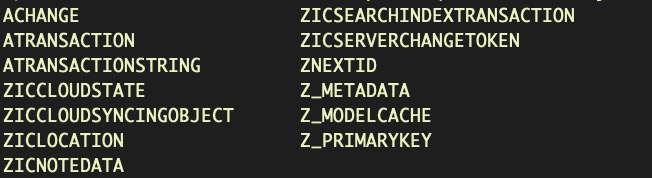

We can find the Notes database in the ~/Library/Group Containers folder. Let’s quickly review the tables:

sqlite3 ~/Library/Group\ Containers/group.com.apple.notes/NoteStore.sqlite .tables

This somewhat byzantine-looking one-liner will dump all the user’s notes to stdout.

for i in $(sqlite3 ~/Library/Group\ Containers/group.com.apple.notes/NoteStore.sqlite "select Z_PK from ZICNOTEDATA;"); do sqlite3 ~/Library/Group\ Containers/group.com.apple.notes/NoteStore.sqlite "select writefile('body1.gz.Z', ZDATA) from ZICNOTEDATA where Z_PK = '$i';"; zcat body1.gz.Z; done

Let’s take a look at how it works. The first part selects the Z_PK column (the primary keys, or unique identifiers, of the notes in the database) and then iterates over each one. The second part takes each primary key and extracts the ZDATA blob containing that note’s content from the ZICNOTEDATA table. Next, writefile writes the blob to a temporary compressed file, body1.gz.Z, and finally zcat decompresses it into plain text!
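To experiment with the same export-and-decompress pattern without touching a real Notes store, here is a minimal sketch that runs it against a throwaway database. The ZICNOTEDATA, Z_PK and ZDATA names come from the one-liner above; everything else (the dump_notes function, the temporary paths and the sample text) is made up, and the stand-in blob is plain gzip-compressed text, which is simpler than what a real note holds. It uses gzip -dc in place of zcat, since zcat’s handling of file suffixes differs between platforms, and it writes each blob to its own file so that nothing is overwritten.

```shell
#!/bin/sh
# Build a stand-in for NoteStore.sqlite holding one gzip-compressed blob.
work=$(mktemp -d)
db="$work/demo.sqlite"
printf 'meet at the usual place\n' | gzip -c > "$work/seed.gz"
sqlite3 "$db" "CREATE TABLE ZICNOTEDATA (Z_PK INTEGER PRIMARY KEY, ZDATA BLOB);
INSERT INTO ZICNOTEDATA (ZDATA) VALUES (readfile('$work/seed.gz'));"

# Export each blob to its own file in an output directory, then decompress it to stdout.
# Arguments: database path, output directory.
dump_notes() {
  for pk in $(sqlite3 "$1" 'select Z_PK from ZICNOTEDATA;'); do
    out="$2/note_$pk.gz"
    # writefile returns the number of bytes written; discard that count.
    sqlite3 "$1" "select writefile('$out', ZDATA) from ZICNOTEDATA where Z_PK = $pk;" > /dev/null
    gzip -dc "$out"
  done
}

dump_notes "$db" "$work"
```

Keeping one file per note also leaves the raw blobs on disk for closer inspection afterwards.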

Finding Other Data Stores

If you are interested in a particular application or process but do not know what it uses for a backing store, if anything, there are a couple of investigative methods you can try. First, see if the process is running in Activity Monitor. If it is, click the Info button and select the ‘Open Files and Ports’ tab and see where it’s writing to. You could also do the same thing with lsof on the command line.

If that doesn’t work, try running strings on the executable file and grepping for / to search for paths that the program might write to. If you’re still out of luck you may have to do a little more macOS reverse engineering to understand what the program is up to and find where it hides its data.

Conclusion

In this post, we’ve taken a tour of various places where macOS stores data on user activity and user behavior and reviewed some of the main ways that you can locate and extract this data for analysis. From Part 1 and Part 2 we have collected data on the device and on user activity. But we also need to look at our device for evidence of manipulation by an attacker that can leave the system vulnerable to future exploitation. We’ll turn to that in the final part of the series. I hope to see you there!
